This document is also available in PDF format and in other languages: español.

Colophon

Suggested citation

Chapman AD & Wieczorek JR (2020) Georeferencing Best Practices. Copenhagen: GBIF Secretariat. https://doi.org/10.15468/doc-gg7h-s853

Licence

The document Georeferencing Best Practices is licensed under Creative Commons Attribution-ShareAlike 4.0 International License.

Abstract

Georeferencing Best Practices provides guidelines to the best practices for georeferencing. Though targeted specifically at biological occurrence data, the concepts and methods presented here may be just as useful in other disciplines.

Document control

v1.2.1, 10 July 2025

Originally based on an earlier publication, Chapman AD & Wieczorek JR (2006) Guide to Best Practices for Georeferencing. Copenhagen: GBIF Secretariat. https://doi.org/10.15468/doc-2zpf-zf42

Disclaimer

The information in this book represents the professional opinion of the authors, and does not necessarily represent the views of the publisher. While the authors and the publisher have attempted to make this book as accurate and as thorough as possible, the information contained herein is provided on an "As Is" basis, and without any warranties with respect to its accuracy or completeness. The authors and the publisher shall have no liability to any person or entity for any loss or damage caused by using the information provided in this book.

Where there are differences in interpretation between this document and translated versions in languages other than English, the English version remains the original and definitive version.

1. Introduction

| A Location that is poorly georeferenced obscures the information upon which a georeference should be based, potentially making the originally provided information irrecoverable. The resulting georeferences can be misleading to users and lead to errors in research outputs. Thus, an important take-home message is, "To georeference poorly is worse than not to georeference at all." |

Throughout this document we use terminology whose specific meaning is important for overall understanding, especially terms whose meaning here might differ from, or vary in, common usage. We mark these key words like this, with a link to the glossary, to call attention to the specific meaning and avoid potential confusion. All terms so marked are defined in the Glossary.

This publication provides guidelines to the best practice for georeferencing. Though it is targeted specifically at biological occurrence data, the concepts and methods presented here can be applied in other disciplines where spatial interpretation of location is of interest. This document builds on the original Guide to Best Practices for Georeferencing (Chapman & Wieczorek 2006), which was one of the outputs from the BioGeomancer project (Guralnick et al. 2006). Several earlier projects and organizations (e.g. MaNIS, MaPSTeDI, INRAM, GEOLocate, NatureServe, CRIA, ERIN, CONABIO) had previously developed guidelines and tools for georeferencing, and these provided a good starting point for such a document. A detailed history of the organizations involved in the development of BioGeomancer and of the original Guide was given in that source. Throughout this document we reference tools and methodologies developed by those organizations and we acknowledge the valuable work by those organizations in their development. This document attempts to bring best practices up to date with terms, technologies, and georeferencing recommendations that have been developed and refined since the original document was published.

This document is designed so that institutions with georeferencing commitments can extract those portions that apply to their own requirements and priorities, and adapt them if necessary where those practices vary from, or elaborate on, the ones given here. Derived works should be made publicly accessible and the derived georeferencing protocol should be cited in the metadata of any georeferenced records that were produced by it. Citing a published protocol when your own methods differ would violate the best practice principle of replicability, described in §1.5. An example of a citable protocol is the Georeferencing Quick Reference Guide (Zermoglio et al. 2020). This document should not be cited as a georeferencing protocol.

This version is a complete revision with many new and updated references. The major changes and additions in this edition include:

- Redefined the term extent to conform with general English and common technical usage to mean a distance, area or volume within defined boundaries, and added the term radial to cover the sense of the term "extent" in previous documents (Wieczorek 2001, Wieczorek et al. 2004, Chapman & Wieczorek 2006, Wieczorek et al. 2012a, Wieczorek & Bloom 2015).

- Introduced the concept of the corrected center to replace geographic center wherever that was used in the past. This is an important change because the geographic center did not necessarily yield the correct minimum uncertainty due to the extent of a feature; the corrected center does.

- Expanded the sections on elevation and updated them to provide the most recent information on recording elevation and on determining uncertainty due to the accuracy of GPSs and DEMs.

- Expanded information on GPS satellites to include information on the other GNSS satellite systems.

- Added information on the use of smartphones and cameras to record GPS locations and elevations.

- Elaborated on the shape georeferencing method, including steps to refine the point-radius georeferencing method.

- Expanded the explanations to include ecological, marine and other data collected in transects, along irregular paths, in polygons, or on grids.

- Added information on determining georeferences for subterranean locations such as caves, tunnels and mine sites.

- Added information on bathymetry and underwater depths.

- Integrated this document with the companion documents Georeferencing Quick Reference Guide (Zermoglio et al. 2020) and Georeferencing Calculator Manual (Bloom et al. 2020).

1.1. Objectives

This document aims to provide current best practice for using the point-radius, bounding box, and shape georeferencing methods, whether for new records in the field or for retrospective georeferencing of historic and un-georeferenced locations. We hope that the reader will come away from this document with, if nothing else, a good appreciation of the following essential principles:

- No matter how specific a location might seem, every location has an associated uncertainty, and this uncertainty determines the conditions under which the spatial interpretation of the location can be used. Based on this, coordinates without a carefully determined uncertainty should not be considered a georeference; they should be considered coordinates whose meaning is not clear.

- Interpretations of locations are fraught with a wide variety of sources of uncertainty, and these are not always trivial to aggregate into an overall uncertainty.

- The best locality description is one that is specific, concise, complete, and unambiguous; the more detailed the description, the better the chance of getting a georeference that is reproducible and that minimizes the uncertainty.

- Coordinate precision is not the same as coordinate uncertainty; it is just one of many factors contributing to the overall uncertainty.

- Users of georeferences should take into account the uncertainty (or lack of its documentation) when choosing data for analyses, as without that information, analyses may be skewed and results biased or altogether wrong.

- Coordinates alone cannot generally be checked for consistency; a locality description provides a confirmation that coordinates are correct.

- Coordinates alone do not unambiguously define a location, because:

  - coordinates require a coordinate reference system to anchor the coordinates in relation to the Earth

  - no location is a point; even the most specific locations have an extent

  - any device that provides coordinates has an associated uncertainty

  Conclusion: coordinates alone do not constitute a georeference.

- Geometries are great as georeferences, if you can deal with them; if not, the point-radius is surprisingly powerful because, using a Geographic Information System (GIS), it can be intersected with other spatial layers to get geometries (shapes).

1.2. Target Audience

This work is designed for those who need or want to know, in detail, why the best practices are what they are. It is also for individuals or organizations planning a georeferencing project, for whom it provides a series of questions that suggest particular subsets of the best practices to follow.

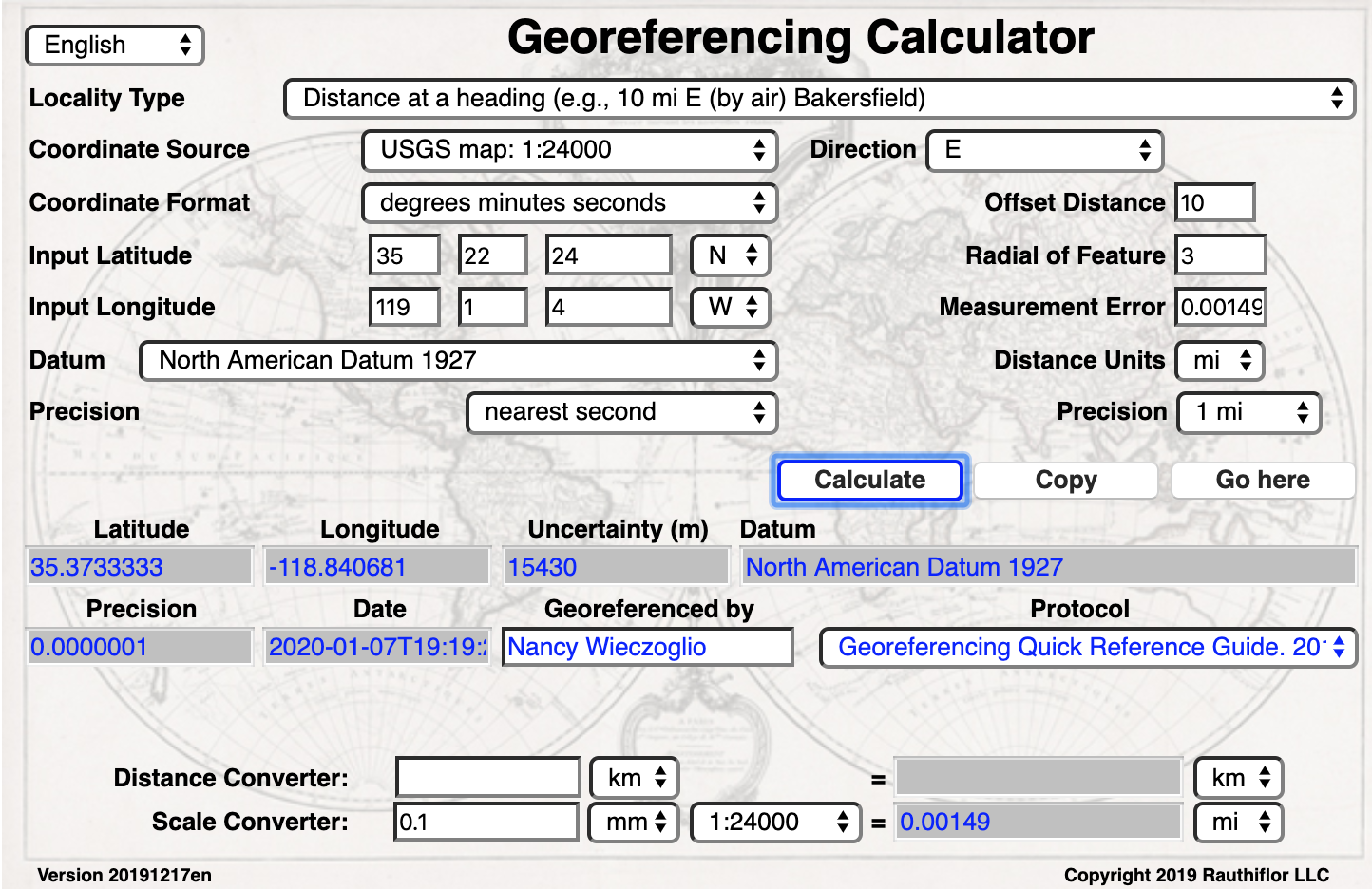

For those who just need to know how to put these practices into action while georeferencing, the Georeferencing Quick Reference Guide is the most suitable document to have at hand. The Quick Reference Guide refers to details in this document as needed and accompanies the Georeferencing Calculator, which is a tool to calculate coordinates and uncertainty following the methods described in this document.

Above all, this document will help data end users to understand the implications of trying to use records that have not undergone georeferencing best practices and the value of those that have.

1.3. Scope

This document is one of three that cover recommended requirements and methods to georeference locations. It is meant to cover the theoretical aspects (how to, and why) of spatially enabling information about the location of biodiversity-related phenomena, including special consideration for ecological and marine data. It also covers approaches to large-scale and collaborative georeferencing projects.

These documents DO NOT provide guidance on georectifying images or geocoding street addresses.

The accompanying Georeferencing Quick Reference Guide provides a practical how-to guide for putting the theory into practice, especially for the point-radius georeferencing method. The Quick Reference Guide relies on this document for background, definitions, and more detailed explanations, while describing exactly how to deal with a wide variety of specific cases (see §3.4.8).

The Georeferencing Calculator is a browser-based JavaScript application that aids in georeferencing descriptive localities and provides methods to help obtain geographic coordinates and uncertainties for locations (see §3.4.9).

1.4. Constraints

Constraints to using this document may arise because of:

- Specimens with labels that are hard to read or decipher.

- Records that don’t contain sufficient information.

- Records that contain conflicting information.

- Historic localities that are hard to find on current maps.

- Locality names that have changed through time.

- Marine locations from old ships' logs.

- Lack of information on datums and/or coordinate reference systems.

- Data Management Systems that don’t allow for recording or storage of the required georeferencing information.

- Poor or no internet facilities.

- Lack of access to suitable resources (maps, reliable gazetteers, etc.).

- Lack of institutional/supervisor support.

- Lack of training.

1.5. Principles of Best Practice

The following are principles of best practice that should be applied to georeferencing:

- Accuracy – a measure of how well the data represent the truth, for example, how well the true location of the target of an observation, collecting, or sampling event is represented in a georeference. This includes considerations taken both at the moment when the location was recorded and when it was georeferenced. Note that careless lack of precision will have an adverse effect on accuracy (see §1.6).

- Effectiveness – the likelihood that a work program achieves its desired objectives. For example, the percentage of records for which the coordinates and uncertainty can be accurately identified and calculated (see §6.8).

- Efficiency – the relative effort needed to produce an acceptable output, including the effort to assemble and use external input data (e.g. gazetteers, collectors’ itineraries, etc.).

- Reliability – the relative confidence in the repeatability or consistency with which information was produced and recorded. The reliability of sources and methods can affect the accuracy of the results.

- Accessibility – the relative ease with which users can find and use information in all of the senses supported by FAIR principles (Wilkinson et al. 2016) of data being Findable, Accessible, Interoperable, and Reusable.

- Transparency – the relative clarity and completeness of the inputs and processes that produced a result. For example, the quality of the metadata and documentation of the methodology by which a georeference was obtained.

- Timeliness – relates to the frequency of data collection, its reporting and updates. For example, how often are gazetteers updated, how long after georeferencing are the records made available to others, and how regularly are updates/corrections made following feedback.

- Relevance – the relative pertinence and usability of the data to meet the needs of potential users in the sense of the principle of "fitness for use" (Chapman 2005a). Relevance is affected by the format of the output and whether the documentation and metadata are accessible to the user.

- Replicability – the relative potential for a result to be reproduced. For example, a georeference following best practices would have sufficient documentation to be repeated using the same inputs and methods.

- Adaptability – the potential for data to be reused under changing circumstances or for new purposes. For example, georeferences following best practices would have sufficient documentation to be used in analyses for which they were not originally intended.

In addition, an effective best practices document should:

- Align the vision, mission, and strategic plans in an institution to its policies and procedures and gain the support of sponsors and/or top management.

- Use a standard method of writing (writing format) to produce professional policies and procedures.

- Satisfy industry standards.

- Satisfy the scrutiny of management and external/internal auditors.

- Adhere to relevant standards and biodiversity informatics practices.

1.6. Accuracy, Error, Bias, Precision, False Precision, and Uncertainty

There is often confusion around what is meant by accuracy, error, bias, precision, false precision, and uncertainty. In addition to the following paragraphs, refer to the definitions in the Glossary and Chapman 2005a. All of these concepts are relevant to measurements.

Accuracy, error, and bias all relate directly to estimates of true values. The closer a statement (e.g. a measurement) is to the true value, the more accurate it is. Error is a measure of accuracy: the difference between an estimated value and the true value. The more accurate an estimate, the smaller the error. Bias is a measurement of the average systematic error in a set of measurements. Bias often indicates a calibration or other systematic problem, and can be used to remove systematic errors from measurements, thus making them more accurate.

Because the true value is not known, but only estimated, the accuracy of the measured quantity is also unknown. Therefore, accuracy of coordinate information can only be estimated.

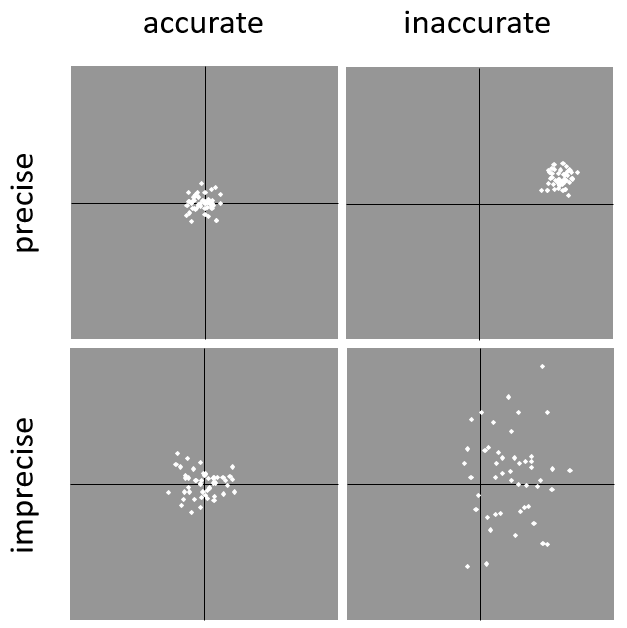

Whereas error is an estimate of the difference between a measured value and the truth, precision is a measurement of the consistency of repeated measurements to each other. Precision is not the same as accuracy (see Figure 1) because measurements can be consistently wrong (have the same error). Precise measurements of the same target will give similar results, accurate or not. We quantify precision as how specific a measurement should be to give consistent results. For example, a measuring device might give measurements to five decimal places (e.g. 3.14159), while repeated measurements of the same target with the same device are only consistent to four decimal places (e.g. 3.1416). We would say the precision is 0.0001 in the units of the measurement.
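The distinction between bias (an accuracy problem) and precision can be illustrated numerically. The following is a minimal sketch, not a prescribed method: it assumes a reference value is available for the target (for example a surveyed benchmark), uses the sample standard deviation as a stand-in for precision, and works on invented readings. The function name `bias_and_spread` is hypothetical.

```python
from statistics import mean, stdev

def bias_and_spread(measurements, reference_value):
    """Return (bias, spread) for repeated measurements of one target.

    bias:   mean signed error relative to the assumed reference value
    spread: sample standard deviation, used here as a proxy for precision
    """
    errors = [m - reference_value for m in measurements]
    return mean(errors), stdev(measurements)

# Invented readings of a target whose reference value is taken to be 10.0.
precise_but_biased = [10.51, 10.49, 10.50, 10.52, 10.48]
accurate_but_noisy = [9.6, 10.5, 10.1, 9.7, 10.1]

bias_a, spread_a = bias_and_spread(precise_but_biased, 10.0)
bias_b, spread_b = bias_and_spread(accurate_but_noisy, 10.0)

# A known bias can be subtracted to remove the systematic part of the error.
corrected_a = [m - bias_a for m in precise_but_biased]
```

The first set of readings is tightly clustered but offset from the reference value, while the second is centered on it but scattered. Subtracting the estimated bias moves the first set onto the reference value without changing its spread.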

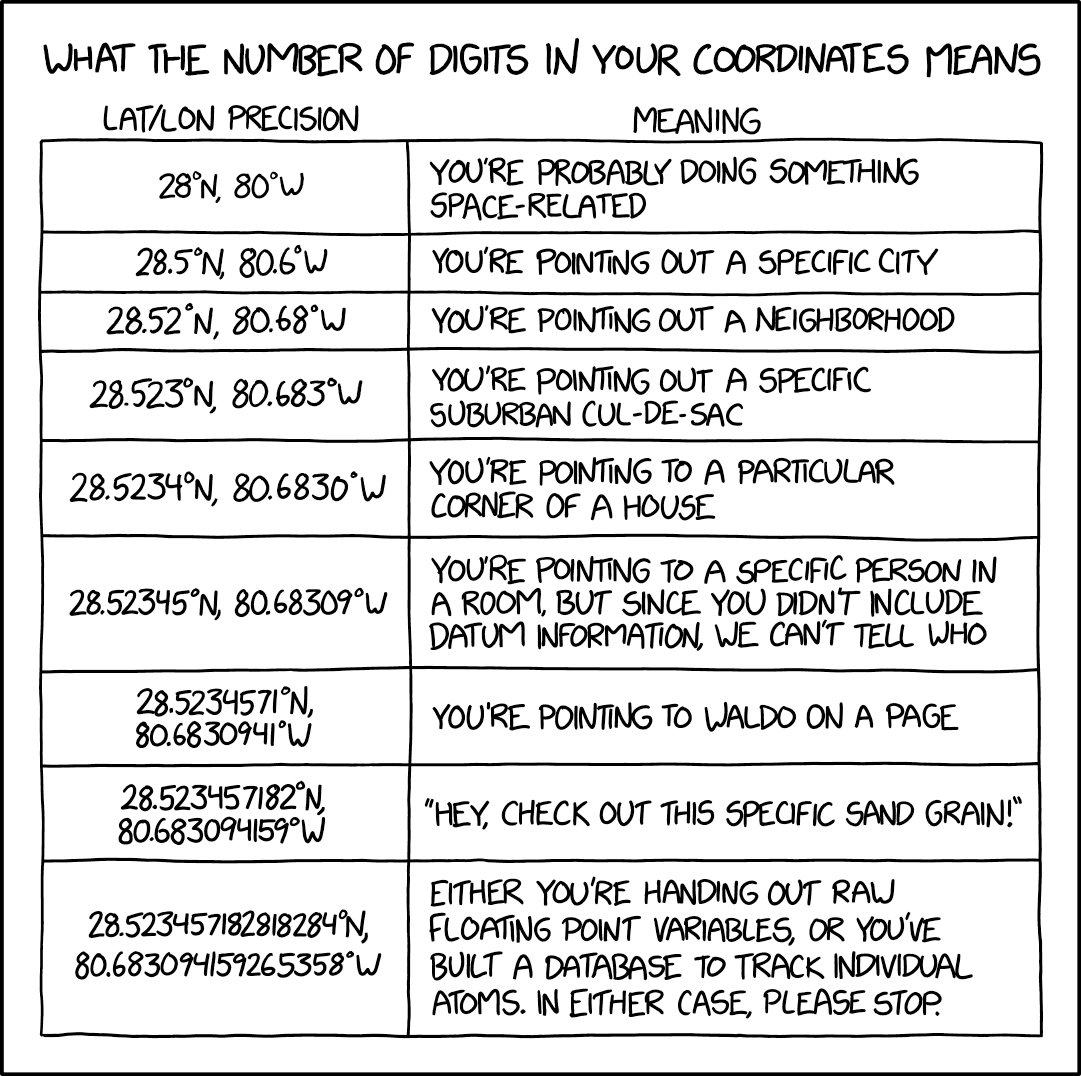

False precision refers to recorded values that have precision that is unwarranted by the original measurement. This is often an artefact of how data are stored, calculated, represented, or displayed. For example, a user interface might be designed to always display coordinates with five decimal places (e.g. 3.00000), demonstrating false precision for any coordinate that was not precise (e.g. 3°, a latitude given only to the nearest degree). Because false precision can be undetectable, the actual precision of a measurement is something that should be captured explicitly rather than inferred from the representation of a value. This is particularly true for coordinates, which can suffer from false precision as a result of a format transformation. For example, 3°20’ has a precision of one minute, equivalent to about 0.0166667 degrees, but when stored as decimal degrees where five decimal places are retained and displayed the value would be 3.33333, with a false precision of 0.00001 degrees. Also see Figure 2.
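The 3°20’ example can be sketched in code. This is an illustrative sketch only: the function name `dms_to_decimal` is hypothetical, it assumes non-negative whole-number inputs with the hemisphere handled elsewhere, and it simply returns the precision of the finest unit supplied alongside the converted value so that the precision is captured explicitly.

```python
def dms_to_decimal(degrees, minutes=None, seconds=None):
    """Convert degrees/minutes/seconds to decimal degrees.

    Returns (decimal_degrees, precision_in_degrees). The precision reflects
    the finest unit actually supplied, not the number of digits that the
    decimal value happens to have.
    """
    value = float(degrees)
    precision = 1.0                  # recorded to the nearest degree
    if minutes is not None:
        value += minutes / 60.0
        precision = 1.0 / 60.0       # recorded to the nearest minute
    if seconds is not None:
        value += seconds / 3600.0
        precision = 1.0 / 3600.0     # recorded to the nearest second
    return value, precision

value, precision = dms_to_decimal(3, 20)
print(f"{value:.5f}")      # 3.33333, which on its own suggests 0.00001 degrees
print(f"{precision:.7f}")  # 0.0166667, the precision of the original value
```

Storing the second number with the coordinate is one way to keep the original precision from being lost in a format transformation.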

Like error, uncertainty is a measure of how different an unknown true value might be from a value given. In georeferencing, we use uncertainty to refer to the maximum distance from a center coordinate of a georeference to the furthest point where the true location might be, that is, a combination of all the possible sources of error given as a distance.

1.7. Software and Online Tools

Software and tools come and go and are regularly updated, so rather than include a list in this document, we refer readers to georeferencing.org.

1.8. Conformance to Standards

Throughout this document, we have, where possible, recommended practices that conform to appropriate geographic information standards and standards for the transfer of biological and geographic information. These include standards developed by the Open Geospatial Consortium (OGC 2019), the Technical Committee for digital geographic information and geomatics (ISO/TC 211), and Biodiversity Information Standards (TDWG). Also, this document supports the FAIR principles of data management in recommending that well-georeferenced data are Findable, Accessible, Interoperable, and Reusable.

1.9. Persistent Identifiers (PIDs)

The use of Persistent Identifiers (PIDs), including Globally Unique Identifiers (GUIDs), Digital Object Identifiers (DOIs), etc., for uniquely identifying individual objects and other classes of data (such as collections, observations, images, and locations) is under discussion. It is important that any identifiers used are globally unique (applied to exactly one instance of an identifiable object), persistent, and resolvable (Page 2009, Richards 2010, Richards et al. 2011). As yet, very few institutions use PIDs for specimens, and even fewer for locations; however, a recent paper by Nelson et al. (2018) makes a number of recommendations on minting, managing and sharing GUIDs for herbarium specimens. We recommend that once a stable system for assigning and using PIDs is implemented, it be used wherever practical, including for locations.

2. Elements for Describing a Location

In this section we discuss best practices for capturing and recording information so that it can be georeferenced and shared in the most productive and efficient way, following standard guidelines and methodologies. This will lead to improved consistency in recording, sharing, and use of data.

Collecting data in the field sets the stage for good georeferencing procedures (Museum of Vertebrate Zoology 2006). Many techniques now exist that can lead to well documented georeferenced locations. It is important, however, that the locations be recorded correctly in order to reduce the likelihood of error. We recommend that all new collecting events use a GPS for recording coordinates wherever possible, and that the GPS be set to a relevant datum or coordinate reference system (see §2.5). There are many issues that need to be considered when collecting data in the field and in this section we provide recommendations for best practice.

2.1. The Importance of Good Locality Data

When recording data in the field, whether from a map or when using a GPS, it is important to record descriptive locality information as an independent validation of a georeference. The extent to which validation can occur depends on how well the locality description and its spatial counterpart describe the same place. The highest quality locality description is one contributing the least amount of uncertainty possible. This is equally important for retrospective georeferencing, where locality descriptions are given and georeferences are not, and for georeferencing in the field.

2.2. Localities

Provide a descriptive locality, even if you have coordinates. The locality should be as specific, succinct, unambiguous, complete, and as accurate as possible, leaving no room for multiple interpretations.

Features used as reference points should be stable, i.e. places (permanent landmarks, trig points, etc.) that will remain unchanged for a long time after the event is recorded. Do NOT use temporary features or waypoints as the key reference locality.

To facilitate the validation of a locality, use reference features that are easy to find on maps or in gazetteers. At all costs, avoid using vague terms such as "near" and "center of" or providing only an offset without a distance, such as "West of Jiuquan", or worse "W Jiuquan".

In any locality that contains a feature that can be confused with another feature of a different type, specify the feature type in parentheses following the feature name, for example, "Clear Lake (populated place)".

If recording locations on a path (road, river, etc.), it is important to also record whether the distances were measured along the actual path (e.g. ‘by road’) or as a direct line from the origin (e.g. ‘by air’).

| The most specific localities are those described by a) a distance and heading along a path from a nearby and well-defined intersection, or b) two cardinal offset distances from a single persistent nearby feature of small extent. |

| It is good practice not to use quotation marks ("") in locality descriptions as this can generate problems down the line if using open text files, spreadsheets, etc. |

By describing a location in terms of a distance along a path, or by two orthogonal distances from a feature, one removes uncertainty due to imprecise headings, which, when the distances are great, can be the biggest contributing factor to overall uncertainty. Choosing a reference feature with small extent reduces the uncertainty due to the size of the reference feature, and by choosing a nearby reference feature, one reduces the potential for error in measuring the offset distances, especially along paths. The Museum of Vertebrate Zoology at the University of California, Berkeley has published a guide to recording good localities in the field that follows these principles. Following is an example locality from that document (copied with permission).

Locality: Modoc National Wildlife Refuge, 2.8 mi S and 1.2 mi E junction of Hwy. 299 and Hwy. 395 in Alturas, Modoc Co., Calif.

Lat/Long/Datum: 41.45063, −120.50763 (WGS84)

Elevation: 1330 ft

GPS Accuracy: 24 ft

Radial: 150 ft

References: Garmin Etrex Summit GPS for coordinates and accuracy, barometric altimeter for elevation.

When recording a location that does not have a feature that can be easily referenced, for example a

2.3. Extent of a Location

The extent of a location is the totality of the space it occupies. The extent is a simple way to alert the user that, for example, all of the specimens collected or observations made at the stated coordinates were actually within an area of up to 0.5 kilometers from that point. It can be quite helpful at times to include in your field notes a large-scale (highly detailed) map of the local vicinity for each locality, marking the area in which events actually occurred.

The extent may be a linear distance, an area or a volume represented by one or more buffered points (i.e. a point-radius), buffered lines (e.g. transects, stratigraphic sections), polygons, or other geometries in two or three dimensions (sphere, cube, etc.).

A location can be anchored to a position (as coordinates, potentially in combination with elevation, depth and distance above surface) within the extent. This may be the corner or center of a grid, the center of a polygon, the center of a circle, etc.

The geographic extent is the space occupied by the location when projected onto a 2D coordinate reference system in geographic coordinates (e.g. latitude and longitude in decimal degrees in WGS84 datum on Google Maps). The geographic radial is the line segment from the corrected center of the location to the furthest point on the boundary of the geographic extent of that location. This simplified representation may be convenient for many uses, as long as the references to the extent are not lost. With the coordinates alone, the nature of the extent and the variety of conditions found therein will be lost, thus sacrificing the utility of the spatial information about the location and the contexts in which the data can be used.

When recording observations, whether by people or from fixed recording instruments such as camera traps (Cadman & González-Talaván 2014), sound recorders, etc., the extent should include the effective field of view (for camera traps) or the area of detectable signals (for sound recorders), etc. In these cases the most faithful representation of the location (the one that yields the smallest maximum uncertainty distance) has its coordinates at the center of the extent of the field of detection, not at the position of the recording device or person. The true location may need to be calculated from the coordinates of the device using the radial and point-radius georeferencing method. If the position of the device or person is the only practical way to give the coordinates, then the radial for the location is the distance from that position to the furthest point in the field of detection.

For

2.3.1. Transects

If the locality is recorded as the center of the transect and half the length of the transect is then used to describe uncertainty, information about the orientation of the transect is lost, and the description essentially becomes equivalent to a circle.

2.3.2. Paths

Not all linear-based locations are transects or straight lines. We use the term path to highlight this broader concept. Illustrative examples are: ad-hoc observations while walking along a trail, an inventory or count of species while travelling along a river, tracking an individual animal’s movements.

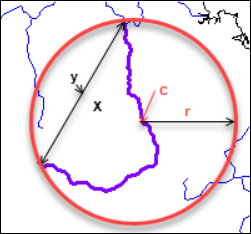

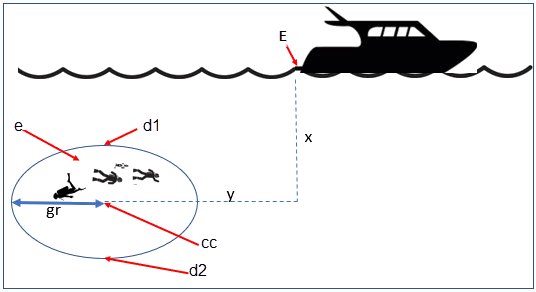

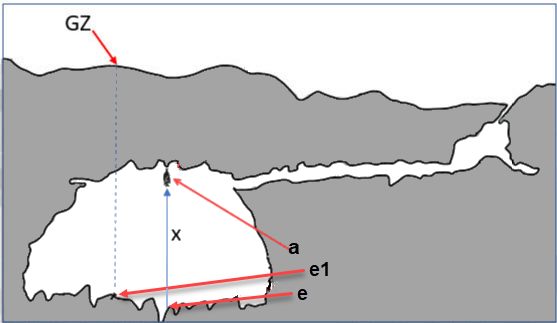

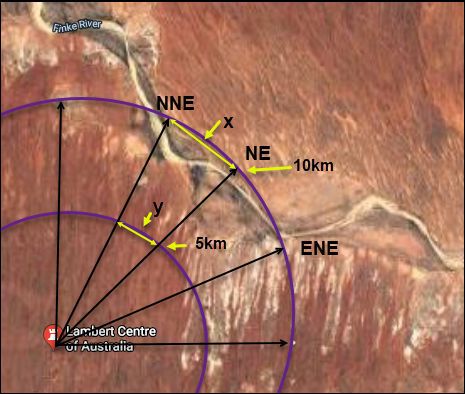

To determine the uncertainty of a described path using the point-radius georeferencing method, one needs to determine the corrected center, i.e. the point on the path from which the smallest circle that encloses the whole path can be drawn ("c" on Figure 3). This is very seldom the same place as the center of a line joining the two ends of the path ("y" on Figure 3) or the center of the extremes of latitude and longitude (the geographic center) of the path ("x" on Figure 3).
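One way to approximate the corrected center of a path is sketched below. It is an illustration under stated assumptions rather than the method used by any particular tool: the path is given as sampled (latitude, longitude) points, only those sampled points are considered as candidates (so a densely sampled path gives a better answer), and distances are great-circle distances on a sphere. The names `haversine_m` and `corrected_center` are hypothetical.

```python
from math import radians, sin, cos, asin, sqrt

EARTH_RADIUS_M = 6371008.8  # mean Earth radius; a spherical approximation

def haversine_m(p, q):
    """Great-circle distance in meters between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(radians, (p[0], p[1], q[0], q[1]))
    a = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))

def corrected_center(path):
    """Return (point, radial_m): the sampled path point whose distance to
    the furthest sampled point is smallest, and that distance."""
    best = min(path, key=lambda c: max(haversine_m(c, p) for p in path))
    return best, max(haversine_m(best, p) for p in path)

# Invented L-shaped path: east along the equator, then north.
path = [(0.0, 0.0), (0.0, 0.01), (0.0, 0.02), (0.01, 0.02), (0.02, 0.02)]
center, radial_m = corrected_center(path)
```

For this L-shaped path the result is the corner of the L. The midpoint of the line joining the two ends, (0.01, 0.01), does not lie on the path at all, which is why it is not a candidate.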

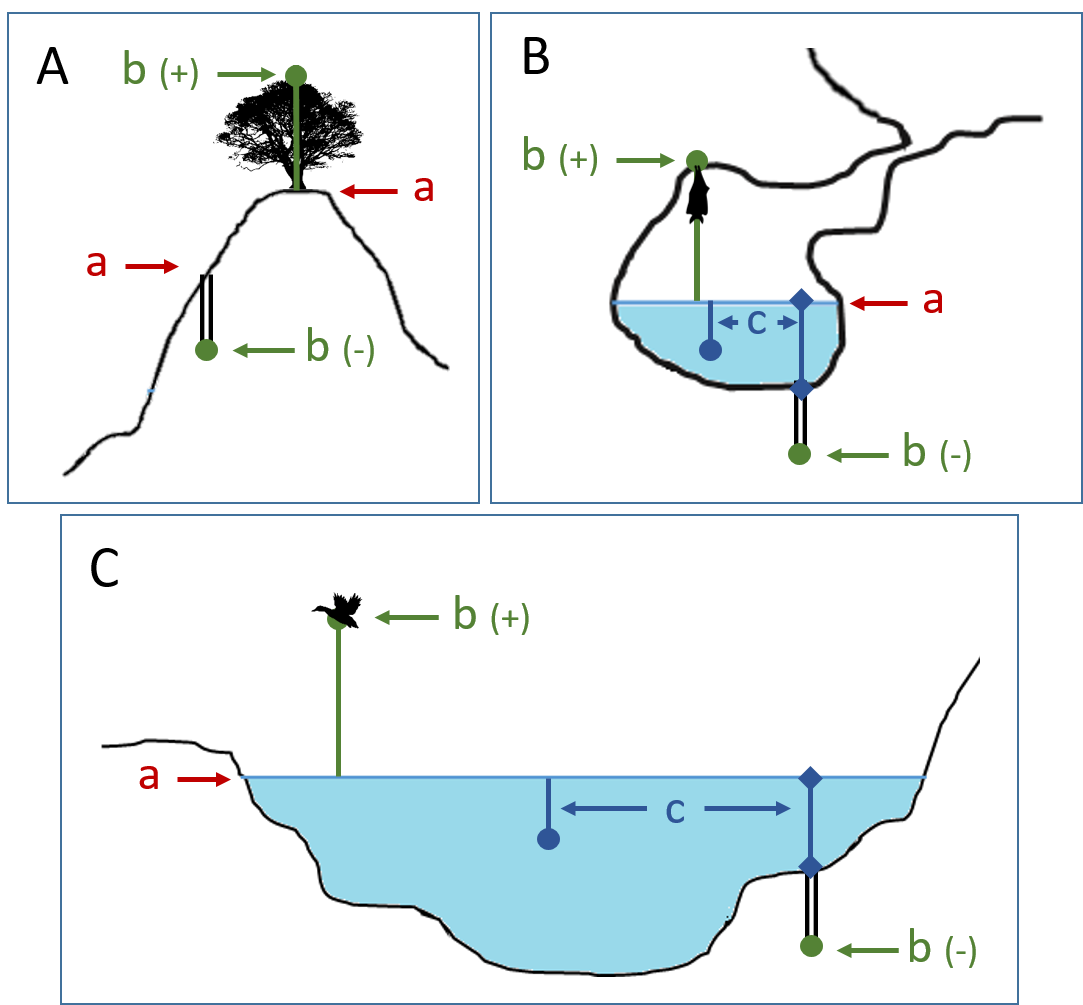

2.3.3. Polygons

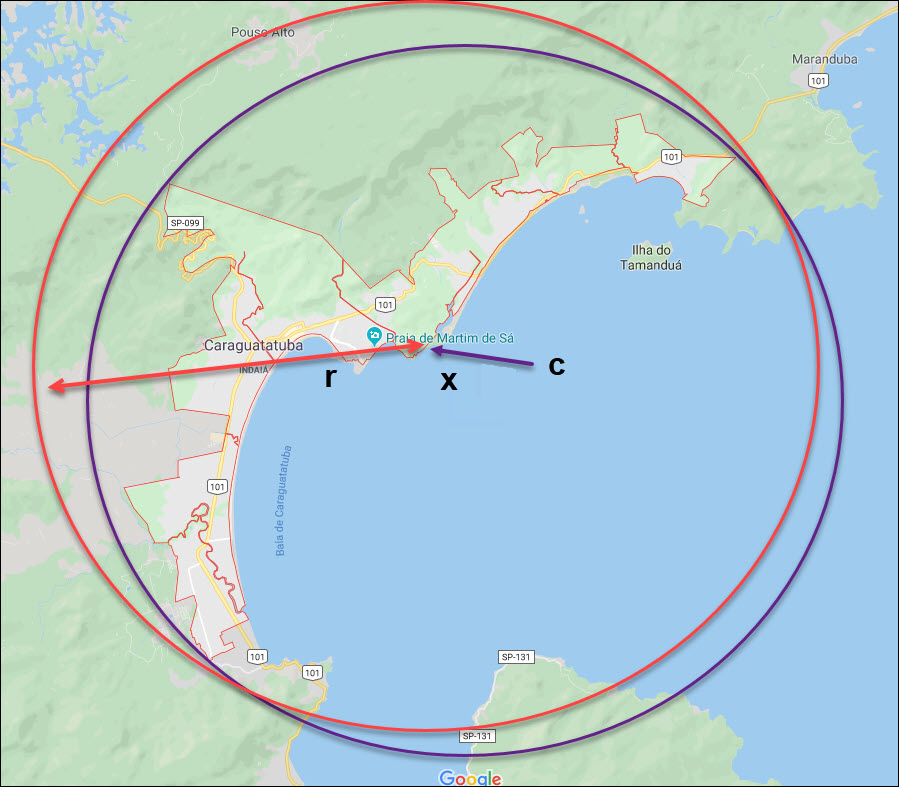

When collecting or recording data from an area (for example, bird counts on a lake, a set of nesting or roosting sites on an offshore coral cay, or a buffered transect), the location is best recorded as a polygon. Polygons can be stored using the Darwin Core (Wieczorek et al. 2012b) field called dwc:footprintWKT, in which a geometry can be stored in the Well-Known Text format (ISO 2016). For the point-radius georeferencing method, if the polygon has a concave shape (for example a crescent), the center may not actually fall within the polygon (Figure 4). In that case, the corrected center on the boundary of the polygon is used for the coordinates of the location and the geographic radial is measured from that point to the furthest extremity of the polygon. Note that the circle based on the corrected center (red circle in Figure 4) will always be greater than the circle based on the geographic center (black circle in Figure 4).

Complex polygons, such as donuts, self-intersecting polygons and multipolygons create even more problems, in both documentation and storage.
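For a simple polygon, a Well-Known Text value for dwc:footprintWKT can be assembled as in the following sketch. The helper name `footprint_wkt` and the example vertices are invented for illustration, and the sketch handles only a single ring without holes. Well-Known Text writes each position as x then y, so longitude comes before latitude, and the ring has to end on its starting vertex.

```python
def footprint_wkt(vertices):
    """Build a WKT POLYGON string from (latitude, longitude) vertices.

    WKT lists each position as "x y" (longitude, then latitude), and the
    ring is closed by repeating the first vertex at the end.
    """
    ring = list(vertices)
    if len(ring) < 3:
        raise ValueError("a polygon needs at least three vertices")
    if ring[0] != ring[-1]:
        ring.append(ring[0])  # close the ring
    coords = ", ".join(f"{lon} {lat}" for lat, lon in ring)
    return f"POLYGON (({coords}))"

# Invented rectangular area given as (latitude, longitude) corners.
record = {
    "dwc:footprintWKT": footprint_wkt(
        [(10.0, 20.0), (10.0, 20.5), (10.5, 20.5), (10.5, 20.0)]
    )
}
```

The coordinate reference system of the vertices is not part of the string produced here and would need to be recorded separately.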

2.3.4. Grids

Grids may be based on the lines of latitude and longitude, or they may be cells in a Cartesian coordinate system based on distances from a reference point. Usually grids are aligned North-South, and if not, their magnetic declination is essential to record. If the extent of a location is a grid cell, then the ideal way to record it would be the polygon consisting of the corners of the grid (i.e. a bounding box). The point-radius method can be used to capture the coordinates of the grid cell center and the distance from there to one of the furthest corners, but given that the geometries for grid cells are so simple, it is best to also capture them as polygons. Often grid cells (e.g. geographic grids) are described using the coordinates of the southwest corner of the grid. Using the southwest corner as the coordinates for a point-radius georeference is wasteful, since the geographic radial would be from there to the farthest corner, which would be twice as far as it would be if the center of the grid cell was used instead. In any case, the characteristics of the grid should be recorded with the locality information.
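The effect of anchoring a grid cell at its southwest corner rather than its center can be checked numerically. The sketch below assumes a geographic grid cell that is square in degrees, takes the center as the midpoint of the cell's latitude and longitude ranges, and uses great-circle distances on a sphere; the function names and the example cell are hypothetical.

```python
from math import radians, sin, cos, asin, sqrt

EARTH_RADIUS_M = 6371008.8  # mean Earth radius; a spherical approximation

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points in degrees."""
    lat1, lon1, lat2, lon2 = map(radians, (lat1, lon1, lat2, lon2))
    a = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_M * asin(sqrt(a))

def grid_cell_radials(south, west, size_deg):
    """Return (radial_from_center_m, radial_from_sw_corner_m) for a cell
    whose southwest corner is (south, west) and whose sides are size_deg."""
    north, east = south + size_deg, west + size_deg
    corners = [(south, west), (south, east), (north, west), (north, east)]
    center = (south + size_deg / 2, west + size_deg / 2)
    from_center = max(haversine_m(*center, *c) for c in corners)
    from_sw = max(haversine_m(south, west, *c) for c in corners)
    return from_center, from_sw

# Invented quarter-degree cell with its southwest corner at 19°S, 32°E.
from_center, from_sw = grid_cell_radials(-19.0, 32.0, 0.25)
ratio = from_sw / from_center
```

For a cell of this size the ratio comes out very close to two, consistent with the statement above; it is not exactly two because meridians converge towards the poles.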

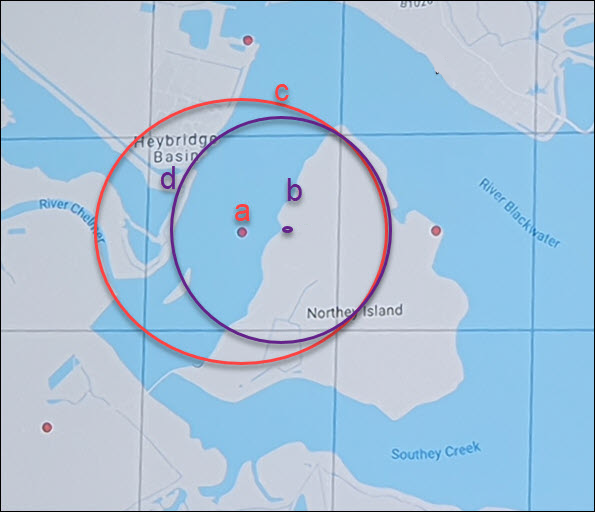

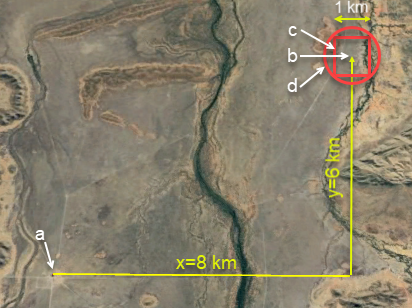

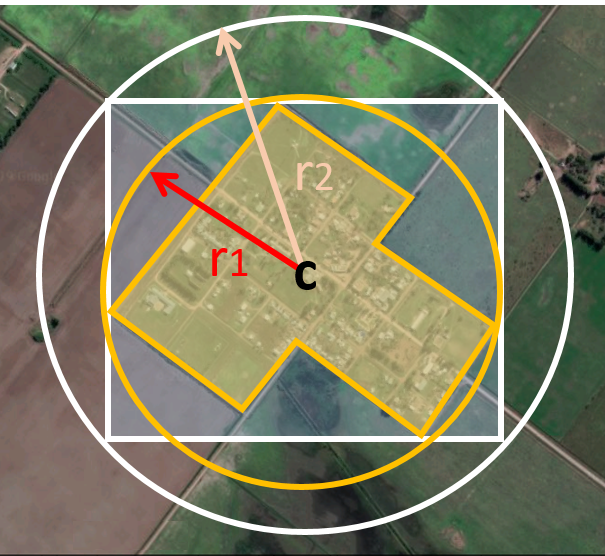

It is important when converting gridded data to geographic coordinates to also check the locality description. Locality information may allow you to refine the location as in Figure 5 where just having the grids without the locality information (i.e. "on Northey Island") would lead to the circle (c) with its center (a) at the center of the grid. Knowing that the record is on Northey Island, however, allows you to refine the location to the smaller circle (d) with its center at (b). Note that other criteria (such as a change of datum, map scale, etc.) may add to the uncertainty.

Township, Range and Section and Equivalents

Township, Range and Section (TRS) or Public Land Survey System (PLSS) is a grid-like way of dividing land into townships in the midwestern and western USA. Sections are usually one mile on each side and townships usually consist of 36 sections arranged in a grid with a specific numbering system. Not all townships are square, however, as there may be irregularities based on administrative boundaries, for example. For this reason, though these systems resemble grids, they are best treated as individual polygons. Similar subdivisions are used in other countries

Quarter Degree Grid Cells

Quarter Degree Grid Cells (QDGC) (Larsen et al. 2009, Larsen 2012) and their predecessor Quarter Degree Squares (QDS) have been used in many historical African biodiversity atlas projects and continue to be used for current South African biodiversity projects such as the Atlas of South African birds (Harrison et al. 1997). It has also been recommended as the method to use for generalizing sensitive biodiversity data in South Africa (SANBI 2016, Chapman 2020).

The QDGC method for recording grids follows an earlier system (QDS) that was developed for South Africa, and only worked in southern Africa, i.e. in the southern and eastern hemispheres. Larsen et al. (2009) provided an extension to the QDS standard that allowed the methodology to be used across the world in all hemispheres. To do this, they adapted the reference system to address locations independent of the hemisphere. This required the addition of characters delimiting north/south and east/west positions. An extra zero was added to east/west references to pad to three characters. They changed the reference position for the grids to that which is closest to the Prime Meridian and the Equator (i.e., in northern hemisphere Africa, for example to the bottom left, while retaining the top left for southern Africa). The code is designated by:

- A symbol denoting the eastern or western hemisphere (E or W).
- The three-digit degree of longitude.
- A symbol denoting the northern or southern hemisphere (N or S).
- The two-digit degree of latitude.

- Each degree square is then divided into sixteen quarter-degree squares, each 15’ x 15’. These are given two additional letters as indicated. Thus in Figure 6, the green square is represented by the code E018S32CB.

The earlier Quarter Degree Square (QDS) methodology references this square as 3218CB. The QDS methodology is still commonly used in southern Africa, but is easily translatable to the QDGC system.
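The degree-square part of a QDGC code (hemisphere symbol, three-digit longitude, hemisphere symbol, two-digit latitude) can be sketched in a few lines of Python. This is a minimal illustration, not part of the QDGC standard: the function name is hypothetical, and assigning zero to "E" and "N" is an assumption. The two-letter quarter-degree suffix is not derived here, because the letter layout is defined by the figure rather than by the text.

```python
def qdgc_degree_prefix(longitude: float, latitude: float) -> str:
    """Build the degree-square part of a QDGC code, e.g. 'E007N09'.

    Hypothetical helper: decimal degrees in, hemisphere symbols and
    zero-padded whole degrees out.
    """
    if not (-180.0 <= longitude <= 180.0 and -90.0 <= latitude <= 90.0):
        raise ValueError("coordinates out of range")
    ew = "E" if longitude >= 0 else "W"  # assumption: 0 is treated as East
    ns = "N" if latitude >= 0 else "S"   # assumption: 0 is treated as North
    # Truncate toward zero so that the square is referenced from the corner
    # closest to the Prime Meridian and the Equator.
    lon_deg = int(abs(longitude))
    lat_deg = int(abs(latitude))
    return f"{ew}{lon_deg:03d}{ns}{lat_deg:02d}"


print(qdgc_degree_prefix(7.6, 9.3))
print(qdgc_degree_prefix(-70.2, -33.5))
```

The two calls illustrate a northern/eastern and a southern/western location; the padding to three and two digits follows the component list above.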

2.3.5. Three-Dimensional Shapes

Most terrestrial locations are recorded with reference to the terrestrial surface as geographic coordinates, sometimes with elevation. Some types of

2.4. Coordinates

Whenever practical, provide the coordinates of the location where an event actually occurred (see §2.3) and accompany these with the coordinate reference system of the coordinate source (map or GPS). The two coordinate systems most commonly used by biologists are based on geographic coordinates (i.e. latitude and longitude) or Universal Transverse Mercator (UTM) (i.e. easting, northing and UTM zone).

A datum is an essential part of a coordinate reference system and provides the frame of reference. Without it the coordinates are ambiguous. When using both maps and GPS in the field, set the coordinate reference system or datum of the GPS or GNSS receiver to be the same as that of the map so that the GPS coordinates for a location will match those on the map. Be sure to record the coordinate reference system or datum used.

2.4.1. Geographic Coordinates

Geographic coordinates are a convenient way to define a location in a way that is not only more specific than is otherwise possible with a locality description, but also readily allows calculations to be made in a GIS. Geographic coordinates can be expressed in a number of different coordinate formats (decimal degrees, degrees minutes seconds, degrees decimal minutes), with decimal degrees being the most commonly used. Geographic coordinates in decimal degrees are convenient for georeferencing because this succinct format has global applicability and relies on just three attributes, one for latitude, one for longitude, and one for the geodetic datum or ellipsoid, which, together with the coordinate format, make up the coordinate reference system. By keeping the number of recorded attributes to a minimum, the chances for transcription errors are minimized (Wieczorek et al. 2004).

When capturing geographic coordinates, always include as many decimals of precision as given by the coordinate source. Coordinates in decimal degrees given to five decimal places are more precise than a measurement in degrees-minutes-seconds to the nearest second, and more precise than a measurement in degrees and decimal minutes given to three decimal places (see Table 3). Some new GPS/GNSS receivers now display data in decimal seconds to two decimal places, which corresponds to less than a meter everywhere on Earth. This doesn’t mean that the GPS reading is accurate at that scale, only that the coordinates as given do not contribute additional uncertainty.
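The comparison of formats above can be checked with simple arithmetic. The sketch below converts the smallest recorded step of each format into an approximate ground distance. It assumes a round figure of 111,320 m per degree (roughly the length of one degree at the Equator, where a degree of longitude is longest); the constant and function names are illustrative only.

```python
METERS_PER_DEGREE = 111_320.0  # assumed approximation, valid near the Equator


def ground_length_m(step_in_degrees: float) -> float:
    """Approximate ground distance covered by one unit of the last recorded digit."""
    return step_in_degrees * METERS_PER_DEGREE


steps = {
    "decimal degrees, 5 decimal places": 0.00001,
    "degrees minutes seconds, whole seconds": 1 / 3600,
    "degrees decimal minutes, 3 decimal places": 0.001 / 60,
    "degrees minutes seconds, 2 decimal places": 0.01 / 3600,
}

for label, step in steps.items():
    print(f"{label}: {ground_length_m(step):.2f} m")
```

Under this assumption the four formats resolve to roughly 1.1 m, 31 m, 1.9 m, and 0.3 m respectively, which agrees with the ordering described in the text.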

| Decimal degrees are preferred when capturing coordinates from a GPS. However, where reference to maps is important, and where the GPS receiver allows, set the recorder to report in degrees, minutes, and decimal seconds. |

2.4.2. Universal Transverse Mercator (UTM) Coordinates

Universal Transverse Mercator (UTM) is a system for assigning distance-based coordinates using a transverse Mercator projection from an idealized ellipsoid of the surface of the Earth onto a plane. In most applications of the UTM system, the Earth is divided into a series of six-degree wide longitudinal zones extending between 80°S and 84°N and numbered from 1 to 60 beginning with the zone at the Antimeridian (Snyder 1987). Because of the latitudinal limitation in extent, UTM coordinates are not usable in the extreme polar regions of the Earth. A map of UTM zones can be found at UTM Grid Zones of the World (Morton 2006).

UTM coordinates consist of a zone number, a hemisphere indicator (N or S), and easting and northing coordinate pairs separated by a space with 6 and 7 digits respectively, and all in the order given here. For example, for Big Ben in London (latitude 51.500721, longitude −0.124430), the UTM reference would be: 30N 699582 5709431.
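As an illustration of the zone numbering and the written format, the following sketch derives the zone number from a longitude and assembles a UTM reference string. It is a simplification under stated assumptions: the function names are hypothetical, the special-case zones around southwestern Norway and Svalbard are ignored, and the easting and northing are taken as given rather than computed from the projection.

```python
def utm_zone(longitude: float) -> int:
    """Return the standard six-degree UTM zone number (1-60) for a longitude."""
    if not -180.0 <= longitude <= 180.0:
        raise ValueError("longitude out of range")
    # Zone 1 starts at the Antimeridian (180 degrees W); longitude +180 is
    # folded into zone 60.
    return min(int((longitude + 180.0) // 6) + 1, 60)


def format_utm(zone: int, hemisphere: str, easting: int, northing: int) -> str:
    """Format zone, hemisphere, easting (6 digits) and northing (7 digits)."""
    if hemisphere not in ("N", "S"):
        raise ValueError("hemisphere must be 'N' or 'S'")
    return f"{zone}{hemisphere} {easting:06d} {northing:07d}"


zone = utm_zone(-0.124430)  # longitude of Big Ben
print(format_utm(zone, "N", 699582, 5709431))
```

Keeping the zone and hemisphere in the same string as the easting and northing makes it harder for the zone to be dropped when the value is copied elsewhere.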

Latitude bands are not officially part of UTM, but are used in the Military Grid Reference System (MGRS). They are used in many applications, including in Google Earth. Each zone is subdivided into 20 latitudinal bands, with letters used from South to North starting with "C" at 80°S to "X" (stretched by an extra 4 degrees) at 72°N (to 84°N) and omitting "I" and "O". All letters below "N" are in the southern hemisphere, "N" and above are in the northern hemisphere. When using latitudinal bands, "north" and "south" need to be spelled out to avoid confusion with the latitudinal bands of "N" and "S" respectively. Using the latitudinal band method, the coordinates for Big Ben would be: 30U 699582m east 5709431m north.
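The band lettering can be sketched as a lookup over eight-degree steps. This is a minimal illustration assuming the standard MGRS letter sequence (which skips the letters I and O) and treating the northernmost band as extending to 84°N; the function and constant names are hypothetical.

```python
BAND_LETTERS = "CDEFGHJKLMNPQRSTUVWX"  # 20 bands, letters I and O are not used


def latitude_band(latitude: float) -> str:
    """Return the MGRS latitude band letter for a latitude between 80S and 84N."""
    if not -80.0 <= latitude <= 84.0:
        raise ValueError("latitude bands are only defined from 80S to 84N")
    # Bands are 8 degrees tall starting at 80S; the last band (X) is stretched
    # to cover 72N to 84N, so the index is capped at the final letter.
    index = min(int((latitude + 80.0) // 8), len(BAND_LETTERS) - 1)
    return BAND_LETTERS[index]


print(latitude_band(-33.9))  # a latitude in the southern hemisphere
print(latitude_band(0.0))    # the Equator starts band N
```

Because the band letters "N" and "S" also look like hemisphere indicators, it is worth storing the band in its own field (or spelling out "north" and "south") rather than relying on context.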

National and local grid systems derived from UTM, but which may be based on different ellipsoids and datums, are basically used in the same way as UTMs. For example, the Map Grid of Australia (MGA2020) uses UTM with the GRS80 ellipsoid and Geocentric Datum of Australia (GDA2020) (Geoscience Australia 2019b). An example of a location in MGA2020 is "MGA Zone 56, x: 301545 y: 7011991".

When recording a location, or databasing using UTM or equivalent coordinates, a zone should ALWAYS be included; otherwise the data are of little or no value when used outside that zone, and certainly of little use when combined with data from other zones. Zones are often not reported where a region (e.g. Tasmania) falls completely within one UTM zone. This is OK while the database remains regional, but is not suitable for exchange outside of the zone. When exporting data from databases like these, the region’s zone should be added prior to export or transfer. Better still, modify the database so that the zone remains with the coordinates.

Note that Darwin Core (Wieczorek et al. 2012b) supports UTM coordinates only in the verbatimCoordinates field. There are several tools to convert UTM coordinates to geographic coordinates, including Geographic/UTM Coordinate Converter (Taylor 2003)–see Georeferencing Tools. For details on georeferencing, see Coordinates – Universal Transverse Mercator (UTM) in Georeferencing Quick Reference Guide (Zermoglio et al. 2020).
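One way to keep the zone attached to regional UTM data at export time is sketched below. It assumes a hypothetical regional table that stores only easting and northing, and writes the result into the Darwin Core terms verbatimCoordinates, verbatimCoordinateSystem and verbatimSRS. The input field names, the coordinate values and the choice of "EPSG:32755" (WGS 84 / UTM zone 55S) are illustrative assumptions, not values from any real dataset.

```python
def export_with_zone(record: dict, zone: int, hemisphere: str, srs: str) -> dict:
    """Attach the region's UTM zone to a record before it leaves the database.

    `record` is a hypothetical regional row holding only 'easting' and
    'northing'; the zone and SRS are supplied once for the whole region.
    """
    if hemisphere not in ("N", "S"):
        raise ValueError("hemisphere must be 'N' or 'S'")
    if not 1 <= zone <= 60:
        raise ValueError("UTM zone must be between 1 and 60")
    return {
        "verbatimCoordinates": f"{zone}{hemisphere} {record['easting']} {record['northing']}",
        "verbatimCoordinateSystem": "UTM",
        "verbatimSRS": srs,
    }


# Made-up example row from a regional database that omits the zone.
row = {"easting": 526000, "northing": 5252000}
print(export_with_zone(row, zone=55, hemisphere="S", srs="EPSG:32755"))
```

A more durable fix, as noted above, is to store the zone alongside the coordinates in the database itself so that no export step has to reconstruct it.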

| If using UTM coordinates, always record the UTM zone and the datum or coordinate reference system. |

2.5. Coordinate Reference System

Except under special circumstances (the poles, for example), coordinates without a coordinate reference system do not uniquely specify a location. Confusion about the coordinate reference system can result in positional errors of hundreds of meters. Positional shifts between what is recorded on some maps and WGS84, for example, may be between zero and 5359 m (Wieczorek 2019).

An unofficial (not governed by a standards body) set of EPSG (IOGP 2019) codes is often used (and misused) to designate datums. There are EPSG codes for a variety of entities (coordinate reference systems, areas of use, prime meridians, ellipsoids, etc.) in addition to datums, and the codes for these are often confused. For example, the code for the WGS84 coordinate reference system is EPSG:4326, while the code for the WGS84 datum is EPSG:6326 and the code for the WGS84 ellipsoid is EPSG:7030. The EPSG code has the advantage (when properly chosen) that it is explicit which type of entity it refers to, unlike the common name alone (e.g. "WGS84" alone could refer to the coordinate reference system, the datum, or the ellipsoid). Increasingly, GPS units are reporting coordinate reference systems as EPSG codes. Knowing the EPSG code for the coordinate reference system, one can determine the datum and ellipsoid for that system. It is thus recommended to record the EPSG code of the coordinate reference system if possible; otherwise, record the EPSG code of the datum if possible; otherwise, record the EPSG code of the ellipsoid. If none of these can be determined from the coordinate source, record "not recorded". This is important, as it determines the uncertainty due to an unknown datum (see §3.4.4) and has potentially drastic implications for the maximum uncertainty distance.
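The order of preference described above (coordinate reference system, then datum, then ellipsoid, then "not recorded") can be expressed as a small helper. This is a sketch only; the function name and parameters are hypothetical, and the example codes are used purely as sample inputs.

```python
from typing import Optional


def epsg_code_to_record(
    crs: Optional[str] = None,
    datum: Optional[str] = None,
    ellipsoid: Optional[str] = None,
) -> str:
    """Pick the most informative EPSG code available from the coordinate source."""
    # Preference order: coordinate reference system, then datum, then ellipsoid.
    for code in (crs, datum, ellipsoid):
        if code:
            return code
    # Nothing could be determined from the source.
    return "not recorded"


print(epsg_code_to_record(crs="EPSG:4326", datum="EPSG:6326"))
print(epsg_code_to_record(ellipsoid="EPSG:7019"))  # e.g. only the GRS 1980 ellipsoid is known
print(epsg_code_to_record())
```

Recording "not recorded" explicitly, rather than leaving the field empty, makes it clear that the source was checked and did not provide the information.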

Sources of EPSG codes include epsg.io (Maptiler 2019), Apache 2019, EPSG Dataset v9.1 (IOGP 2019) and Geomatic Solutions 2018. When using a GPS, it is important to set and record the EPSG code of the coordinate reference system or datum. See discussion below under §3.4.

| If you are not basing your locality description on a map, set your GPS to report coordinates using the WGS84 datum or a recent local datum that approximates WGS84 (that may, for example, be legislated for your country) or the appropriate Coordinate Reference System (EPSG Code). Record the datum used in all your documentation. |

2.6. Using a GPS

GPS (Global Positioning System) technology uses trilateration between a GPS/GNSS receiver and GPS or GNSS satellites (Kaplan & Hegarty 2006, Van Sickle 2015, Novatel 2015). As the GNSS satellites are at known positions in space, and the GPS/GNSS receiver can determine the distances to the detected satellites, the position on earth can be calculated. A minimum of four GNSS satellites is required to determine a position on the earth’s surface (McElroy et al. 2007, Van Sickle 2015). This is not generally a limitation today, as one can often receive signals from a large number of satellites (up to 20 or more in some areas). Note, however, that just because your GNSS receiver is showing lots of satellites, it doesn’t mean that all are being used, as the receiver’s ability to make use of additional satellites may be limited by its computational power (Novatel 2015). In the past, many GPS units only referenced the GPS (USA) satellites, of which there are currently 31 (April 2019), but now many GPS/GNSS receivers are designed to access systems from other countries as well – such as GLONASS (Russia), BeiDou-2 (China), Galileo (Europe), NAVIC (India), and QZSS (Japan), making a total of about 112 currently accessible satellites (2019) with a further 23 to be brought into operation over the next few years. This number is increasing rapidly every year (Braun 2019). Prior to the removal of Selective Availability in May 2000, the accuracy of handheld GPS receivers in the field was around 100 meters or worse (McElroy et al. 2007, Leick 1995). The removal of this signal degradation technique has greatly improved the accuracy that can now generally be expected from GPS receivers (GPS.gov 2018).

To obtain the best possible accuracy, the GPS/GNSS receiver must be located in an area that is free from overhead obstructions and reflective surfaces and have a good field of view to a broad portion of the sky (for example, they do not work very well under a heavy forest canopy, although new satellite signal technology is improving the accuracy in these locations (Moore 2017)). The GPS/GNSS receiver must be able to record signals from at least four GNSS satellites in a suitable geometric arrangement. The best arrangement is to have "one satellite directly overhead and the other three equally spaced around the horizon" (McElroy et al. 2007). The GPS/GNSS receiver must also be set to an appropriate datum or coordinate reference system (CRS) for the area, and the datum or CRS that was used must be recorded (Chapman 2005a).

| Set your GPS to report locations in decimal degrees rather than make a conversion from another coordinate system as it is usually more precise (see Table 3), better and easier to store, and saves later transformations, which may introduce error. |

| An alternative where reference to maps is important, and where the GPS receiver allows it, is to set the recorder to report in degrees, minutes, and decimal seconds. |

2.6.1. Choosing a GPS or GNSS Receiver

One of the most important issues for consideration when choosing a GPS or GNSS receiver is the antenna. An antenna behaves as both a spatial and a frequency filter; therefore, selecting the right antenna is critical for optimizing performance (Novatel 2015). One of the drawbacks with smartphones, for example, is the limited size of the GNSS antenna.

For information on issues to consider when selecting an appropriate GNSS antenna and/or GPS receiver, we refer you to Chapter 2 in Novatel 2015 and Chapter 10 in NLWRA 2008.

2.6.2. GPS Accuracy

Most GPS devices are able to report a theoretical horizontal accuracy based on local conditions at the time of reading (atmospheric conditions, reflectance, forest cover, etc.). For highly specific locations, it may be possible for the potential error in the GPS reading to be on the same order of magnitude as the extent of the location. In these cases, the GPS accuracy can make a non-trivial contribution to the overall uncertainty of a georeference.

The latest US Government commitment (US Department of Defense and GPS Navstar 2008) is to broadcast the GPS signal in space "with a global average user range error (URE) of ≤7.8 m (25.6 ft.), with 95% probability". In reality, actual performance exceeds this, and in May 2016, the global average URE was ≤ 0.715 m (2.3 ft), 95% of the time (GPS.gov 2017). Though it does not mean that all receivers can obtain that accuracy, the accuracy of GPS receivers has improved and today most manufacturers of handheld GPS units promise errors of less than 5 meters in open areas when using four or more satellites. The need for four or more satellites to achieve these accuracies is because of the inaccuracies in the clocks of the GPS receivers as opposed to the much more accurate satellite clocks (Novatel 2015). The accuracy can be improved by averaging the results of multiple observations at a single location (McElroy et al. 2007), and some modern GPS receivers that include averaging algorithms can bring the accuracy to around three meters or less. According to GISGeography 2019a, “A well-designed GPS receiver can achieve a horizontal accuracy of 3 meters or better and vertical accuracy of 5 meters or better 95% of the time. Augmented GPS systems can provide sub-meter accuracy”. Another method to improve accuracy is to average over more than one GPS unit. Note that some GPS/GNSS receivers can record up to 20 decimal places of precision, but that doesn’t mean that is the accuracy of the unit.
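Averaging repeated readings taken at one spot can be sketched as follows. This is a deliberately simple illustration: it takes the arithmetic mean of latitude and longitude, which is only reasonable for fixes that are close together and away from the poles and the Antimeridian. The function name and the sample readings are hypothetical.

```python
from statistics import fmean
from typing import List, Tuple


def average_fix(fixes: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Average repeated (latitude, longitude) readings from a single location."""
    if not fixes:
        raise ValueError("at least one fix is required")
    latitudes = [lat for lat, _ in fixes]
    longitudes = [lon for _, lon in fixes]
    # Simple arithmetic mean; assumes all fixes are within a few meters
    # of one another and do not straddle the Antimeridian.
    return fmean(latitudes), fmean(longitudes)


# Made-up readings in decimal degrees, all referring to the same datum.
readings = [(-41.45003, 145.96001), (-41.45001, 145.96005), (-41.44999, 145.96003)]
print(average_fix(readings))
```

The same function could be applied to readings from several receivers at the same location, provided they were all set to the same datum or coordinate reference system.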

2.6.3. Differential GNSS

The use of Differential GNSS (DGNSS) (incorporating Differential GPS (DGPS)) can improve accuracy considerably. DGNSS references a GNSS Base Station (usually a survey control point) at a known position to calibrate the receiving GNSS signal. The Base Station and handheld GNSS receiver reference the satellites’ positions at the same time and thus reduce error due to atmospheric conditions, as well as (to a lesser extent) satellite ephemeris (orbital location) and clock error (Novatel 2015). The handheld GNSS instrument applies the appropriate corrections to the determined position. Depending on the quality of the receivers used, one can expect an accuracy of <1 meter (USGS 2017). This accuracy decreases as the distance of the receiver from the Base Station increases. It is important to note that differential technology is not available in all areas – for example, in remote locations and remote islands, and the resulting accuracy may be less than expected. Again, averaging can further improve on these values (McElroy et al. 2007). It is important to note, however, that most DGNSS is post-processed. Records are stored in the GPS/GNSS unit and then post-processing software is run to improve the measurements once connected to a computer. Post-processing is not as commonly used since the introduction of real-time DGNSS (such as the Satellite Based Augmentation System, see §2.6.4), and is now used mostly in surveying applications where high accuracy is required.

DGNSS accuracies are susceptible to severe degradation due to enhanced ionospheric effects associated with geomagnetic storms. Degradation can be on the order of 2-30 times, depending on the area and the severity of the storm.

2.6.4. Satellite Based Augmentation System

Satellite Based Augmentation System (SBAS) is a collection of geosynchronous satellites originally developed for precision guidance of aircraft (Federal Aviation Administration 2020) and more recently to provide services for improving the accuracy, integrity and availability of basic GNSS signals (Novatel 2015). SBAS receivers are inexpensive examples of real-time differential correction. SBAS uses a network of ground-based reference stations to measure small variations in the GNSS satellite signals. Measurements from the reference stations are routed to master stations, which queue the received Deviation Correction (DC) and send the correction messages to geostationary satellites. Those satellites broadcast the correction messages back to Earth, where SBAS-enabled GPS/GNSS receivers use the corrections while computing their positions to improve accuracy. Separate corrections are calculated for ionospheric delay, satellite timing, and satellite orbits (ephemerides), which allows error corrections to be processed separately, if appropriate, by the user application.

Wide Area Augmentation System

The first Satellite Based Augmentation System (SBAS) was the Wide Area Augmentation System (WAAS), which was originally developed to provide improved GPS accuracy and a certified level of integrity to the US aviation industry, such as to enable aircraft to conduct precision approaches to airports, and for coastal navigation. It was later expanded to cover Canada and Mexico, providing a consistent coverage over North America.

European Geostationary Navigation Overlay Service

The European Geostationary Navigation Overlay Service (EGNOS) was developed as an augmentation system that improves the accuracy of positions derived from GPS signals and alerts users about the reliability of the GPS signals. Originally developed using three geostationary satellites covering European Union member states, EGNOS satellites have now also been placed over the eastern Atlantic Ocean, the Indian Ocean, and the African mid-continent.

Other SBAS Services

More recently, other Satellite Based Augmentation Systems (SBASs) have been, or are in the process of being, developed to cover other parts of the world, including MSAS (Japan and parts of Asia), GAGAN (India), SDCM (Russia), SNAS (China), AFI (Africa) and SACCSA (South and Central America) (ESA 2014). Australia and New Zealand are in the process of developing an SBAS that will provide several-decimeter accuracy across Australia and its marine areas, and one-decimeter accuracy across New Zealand. The system will provide three services to users – an L1 system with sub one-meter horizontal accuracy for aviation purposes; a Dual-Frequency Multi-Constellation (DFMC) service with sub one-meter accuracies; and a Precise Point Positioning (PPP) service (see §2.6.6) with accuracies of 10-15 cm (Guan 2019). Testing is scheduled for completion in July 2020 (Geoscience Australia 2019a).

Accuracy of SBAS Services

A study in 2016 determined that, over most of the USA, the accuracy of Wide Area Augmentation System (WAAS)-enabled, single-frequency GPS units was on the order of 1.9 meters at least 95 per cent of the time (FAA 2017). Accuracy may be poorer in other parts of the world where Satellite Based Augmentation System (SBAS) stations are less common. Note that as most SBAS satellites are geostationary, a line of sight towards the equator (southwards in the northern hemisphere, or northwards in the southern hemisphere) that is blocked by buildings or heavy canopy cover will reduce the accuracy of SBAS correction. Also, during solar storms, the accuracy deteriorates by a factor of around 2.

Despite early indications that WAAS can significantly improve positional accuracy during the most severe period of geomagnetic storms, more recent studies in the USA and Canada have shown that the sparseness of WAAS stations and ionospheric grids does not lead to a significant improvement (Skone & Yousuf 2007). With reference stations needing to have separations within 100 km, improvements are only likely in coastal and near coastal areas of North America and Europe in the foreseeable future.

2.6.5. Ground-based Augmentation System

Ground Based Augmentation Systems (GBAS), also known as Local Area Augmentation Systems (LAAS), provide differential corrections and satellite integrity monitoring in conjunction with VHF radio, to link to GNSS receivers. A GBAS consists of several GNSS antennas placed at known locations with a central control system and a VHF radio transmitter. GBAS is limited in its coverage and is used mainly for specific applications that require high levels of accuracy, availability and integrity, and is the system largely used for airport navigation systems.

2.6.6. Precise Point Positioning

Precise Point Positioning (PPP) depends on GNSS satellite clock and orbit corrections, generated from a network of global reference stations to remove GNSS system error and provide a high level (decimeter) of positional accuracy. Once the corrections are calculated, they are delivered to the end user via satellite or over the Internet.

Although similar to Satellite Based Augmentation System (SBAS) services (see §2.6.4), PPP systems generally provide greater accuracy and have the advantage of providing a single, global reference stream as opposed to the regional nature of an SBAS. Whereas SBAS is free, the use of PPP usually incurs a charge to access the corrections, so it is unlikely that the increased accuracy of PPP, when compared to that of SBAS, will be a consideration for most biological applications.

2.6.7. Static GPS

Static GPS uses high precision instruments and specialist techniques and is generally employed only by surveyors. Surveys conducted in Australia using these techniques reported accuracies in the centimeter range. These techniques are unlikely to be extensively used with biological record collection due to the cost and general lack of requirement for such precision.

2.6.8. Dual and Multi-Frequency GPS

High-end dual and multi-frequency GPS/GNSS devices can bring accuracy to the centimeter level, and even mm level over the long-term (GPS.gov 2017). One of the ways this is done is by removing one of the largest contributors to overall satellite error - error due to the ionosphere (known as ionosphere error) (Novatel 2015).

2.6.9. Smartphones

GPS-enabled smartphones are typically accurate to within 4.9 m (16 ft.) under open sky; however, their accuracy worsens near buildings, bridges, and trees (GPS.gov 2017). A study by Tomaštik et al. 2017 found that the accuracy of smartphones in open areas was around 2-4 m. This decreased to 3-11 m in deciduous forest without leaves, and 3-20 m in deciduous forest with leaves. There are reports that the accuracy in some GPS-enabled smartphones will soon be improved to <1 meter (Moore 2017) and that accuracy in areas with restricted satellite view within cities will be improved drastically with inbuilt 3D smartphone apps and probabilistic shadow matching (Iland et al. 2018). In general, the GNSS chipsets in smartphones are quite good, and any loss of accuracy is usually due to the quality of the antenna, whose chief failing is poor multipath suppression (Pirazzi et al. 2017). In some smartphones where good satellite coverage is unavailable (e.g. in cities and forests), the phone may introduce errors from bias in its internal clock (Pirazzi et al. 2017), leading to occasional large inaccuracies (Arturo Ariño Oct 2019, pers. comm.). The technology for better than 1 meter smartphone accuracy already exists, but it is not available to the public due to the difficulty and cost of incorporating the technology into small smartphones (Braun 2019). The accuracies reported in most publications refer to studies in the USA, Europe, coastal Australia, India or Japan where good differential stations are plentiful. More studies are needed to test smartphone accuracies in remote locations and where differential stations are not available.

Smartphone GPS technology is changing rapidly and there is likely to be new and updated information even before this document is published.

2.6.10. GPS-enabled Cameras

We are not aware of the characteristics of the accuracy of GPS-enabled cameras, but we expect the accuracy to be similar to that of smartphones. One study, using three different cameras, showed the difference between the reported location and the true location to be less than 3 m for all three (Doty 2017).

2.6.11. Diver-towed Underwater GPS Receivers

2.7. Elevation

Supplement the locality description with elevation information if this can be easily obtained. Elevation can be determined from a variety of sources while in the field, including altimeters, maps (both digital and paper), and GPS/GNSS receivers, each with associated uncertainties. Elevation can be estimated after the fact using Digital Elevation Models at the coordinates of the location. In any case, record the method used to determine the elevation.

Elevation markings can narrow down the area in which you place a point. More often than not, however, they seem to create inconsistency. While elevation should not be ignored, it is important to realize that elevation was often measured inaccurately and/or imprecisely, especially early in the 20th century. One of the best uses of elevation in a locality description is to pinpoint a location along a road or river in a topographically complex area, especially when the rest of the locality description is vague.

When adding elevation after the fact be aware that the elevation can vary considerably over a small area (especially in steep terrain) and that the uncertainty of the georeference must be taken into account when determining the elevation. Do not use the coordinates on their own.

2.7.1. Altimeters

A barometric altimeter uses changes in air pressure as a proxy for changes in elevation, and can be a reliable source of elevation if properly calibrated. Calibration requires that the elevation of the altimeter be set to a known starting elevation, which could be determined from a map, for example. Thereafter, as the altimeter goes higher or lower in elevation, it estimates the new elevation directly from the air pressure it experiences. Since weather conditions can change the air pressure independently of changes in elevation, it is important to re-calibrate the altimeter frequently, either by recording the elevation when you stop moving and resetting to that same elevation before starting out again, and/or by recalibrating to known elevations whenever you encounter them.

In theory it would be possible to use a barometric altimeter to determine elevations when in a

2.7.2. Maps

Elevation can be determined using the contours and spot height information from a suitable scale map of the area. In general, the uncertainty in the elevation when read from a map is half the contour interval.

For information on determining accuracy from a map, see §3.4.2.1.

2.7.3. GPS

Elevation accuracy as reported from a GPS has improved markedly in recent years, but elevation accuracy is not usually reported by GPS/GNSS receivers. As a general rule, for most non-Satellite Based Augmentation System (SBAS) or Wide Area Augmentation System (WAAS) enabled GPS/GNSS receivers, elevation error is approximately 2-3 times the horizontal error (USGS 2017). It is hard to find definitive information for smartphones, but it would appear that this same multiplier is a good rule for those as well. With WAAS-enabled GPS, the FAA reports that 95 per cent of the time vertical error is less than 4 meters (FAA 2019). However, the elevation reported on the GPS receiver or smartphone is not necessarily referring to mean sea level (MSL) as reported, but to the zero elevation of the ellipsoid of the datum – see discussion below.
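The rule of thumb above (elevation error of roughly 2-3 times the horizontal error for receivers without SBAS or WAAS) can be written as a one-line estimate. The function name is hypothetical, and the result is only as good as the horizontal error that the receiver reports.

```python
from typing import Tuple


def gps_elevation_error_range_m(horizontal_error_m: float) -> Tuple[float, float]:
    """Estimate the (low, high) elevation error from the horizontal error.

    Applies the approximate 2-3 times multiplier for receivers that are
    not SBAS- or WAAS-enabled.
    """
    if horizontal_error_m < 0:
        raise ValueError("horizontal error cannot be negative")
    return 2.0 * horizontal_error_m, 3.0 * horizontal_error_m


low, high = gps_elevation_error_range_m(5.0)
print(f"Estimated elevation error: {low:.0f}-{high:.0f} m")
```

When a single value is needed for an uncertainty field, taking the upper end of the range is the more cautious choice.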

Note that GPS elevation readings can represent one of at least two different values, depending on the method used by the GPS. Elevation reported can be the geometric height. This is the only value that GPS devices can actually measure, and is the height based on the ellipsoid of the datum. The elevation reported can also be the elevation above MSL, or orthometric height. These values are not directly measured by the GPS, but are calculated as the difference between the geometric height (measured) and the geoid height. The geoid height depends on the geoid and the datum you are trying to compare it against. Thus, to understand the potential difference between elevations based on MSL and those based on the geometric model, the geometric model (datum) must be known. To calculate the potential error using WGS84 datum at a given geographic location, use the Geoid Height Calculator (UNAVCO 2020). For further discussion about these methods, consult Eos Positioning Systems 2018. For a good explanation of the differences between the geoid and mean sea level, we refer you to GISGeography 2019b.
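The relationship between the two kinds of height can be sketched as a subtraction. In the snippet below, the names are illustrative, and the geoid height is assumed to be supplied from an external source (for example, a geoid height calculator for the datum in use); the sample values are made up.

```python
def orthometric_height_m(geometric_height_m: float, geoid_height_m: float) -> float:
    """Elevation above mean sea level (orthometric height).

    geometric_height_m: height above the ellipsoid, as measured by the GPS.
    geoid_height_m: height of the geoid above the ellipsoid at that location
                    (negative where the geoid lies below the ellipsoid).
    """
    return geometric_height_m - geoid_height_m


# Made-up values: 100 m above the ellipsoid where the geoid is 30 m above it.
print(orthometric_height_m(100.0, 30.0))
# Where the geoid lies below the ellipsoid, the MSL elevation is the larger value.
print(orthometric_height_m(100.0, -25.0))
```

If it is not known which of the two heights a device reports, the magnitude of the geoid height at the location is the potential error introduced by that ambiguity.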

2.7.4. Vertical Datums

In 2022, the USA will release a new geometric reference frame and geopotential vertical datum that will replace existing USA geometric vertical datums. Similarly, over the next five years, Australia will move to a new generation height reference frame – the Australian Gravimetric Quasigeoid 2017 (AGQG 2017) (McCubbine et al. 2019). The new reference frames will rely primarily on Global Navigation Satellite Systems (GNSS), as well as on an updated gravimetric geoid model (National Geodetic Survey 2018). The new method of calculating vertical datums will improve vertical accuracies to around 1-2 cm, will provide more accurate GPS-determined elevations (Ellingson 2017), and will allow for dynamic updating. Other jurisdictions are likely to move to new methods of calculating vertical datums over time, meaning that within five years most users will be able to position themselves vertically using mobile Global Navigation Satellite Systems (GNSS) technology with sub-decimeter accuracy (Brown et al. 2019).

2.7.5. Digital Elevation Models

Digital Elevation Models (DEM) are based on elevations above mean sea level (or more recently, the geoid). The models are calculated using sophisticated interpolations and do not necessarily correspond to the actual surface elevation. DEM vertical accuracy is influenced by several factors such as grid size, slope, land cover, and geolocation (horizontal) error, as well as other biases due to the original DEM data collection (e.g. satellite imaging geometry) and/or production method (Mukherjee et al. 2013, Mouratidis & Ampatzidis 2019). Global DEMs such as the Advanced Spaceborne Thermal Emission and Reflection Radiometer (ASTER) Global DEM V2 (Meyer 2011) and the Shuttle Radar Topography Mission (SRTM) are based on 1 arc-second grids (about 30 m x 30 m) (Farr et al. 2007) and have an accuracy of better than 17 m and 10 m respectively (except in steep terrain such as mountains, and in areas with very smooth sandy surfaces with low signal to noise ratio, such as the Sahara Desert (Farr et al. 2007)). Local and regional DEMs may have a smaller grid size, for example a 5 m grid in Australia, which has a vertical accuracy better than one meter, and even 0.3 meter in some areas (Geoscience Australia 2018), or the European Digital Elevation Model, which has an accuracy of better than three meters (Mouratidis & Ampatzidis 2019). Note also that the accuracy of satellite image-based DEMs, being radar based, varies greatly over different land surfaces: forests, shrub or herbaceous vegetation, agricultural areas, bare areas, rocky surfaces, wetlands, and artificial surfaces such as cities. Also, the radar can penetrate into areas of snow, ice, and sand (as in deserts) (Mouratidis & Ampatzidis 2019).
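The "about 30 m x 30 m" figure for a 1 arc-second grid holds near the Equator; the east-west extent of a cell shrinks with latitude. The sketch below approximates the cell dimensions, assuming a spherical approximation of 111,320 m per degree. The constant and function names are illustrative.

```python
import math
from typing import Tuple

METERS_PER_DEGREE = 111_320.0  # assumed spherical approximation


def grid_cell_size_m(latitude: float, arcseconds: float = 1.0) -> Tuple[float, float]:
    """Approximate (east-west, north-south) size of a DEM grid cell in meters."""
    if not -90.0 <= latitude <= 90.0:
        raise ValueError("latitude out of range")
    north_south = arcseconds / 3600.0 * METERS_PER_DEGREE
    # Meridians converge toward the poles, so the east-west extent
    # scales with the cosine of the latitude.
    east_west = north_south * math.cos(math.radians(latitude))
    return east_west, north_south


for lat in (0.0, 45.0, 60.0):
    ew, ns = grid_cell_size_m(lat)
    print(f"latitude {lat:>4}: {ew:.1f} m x {ns:.1f} m")
```

At 60 degrees of latitude the east-west extent is about half of the north-south extent, which is worth keeping in mind when treating the grid size as a contributor to elevation uncertainty.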

2.7.6. Smartphones

Some smartphones, whether they incorporate GPS capabilities or not, use apps that provide elevation values based on a DEM. With smartphone GPS apps, be aware that some devices and apps incorrectly record the method used. The uncertainty in elevation due to an unknown elevation source can be up to 100 meters. For example, the difference with datum WGS84 between the ellipsoid and geoid or mean sea level methods of reporting elevation is shown in Figure 8. Note also that these uncertainties are in addition to the uncertainties associated with the measurements themselves. The only true way of determining what your GPS receiver or smartphone is recording is to test it against a known elevation. Some preliminary studies by the authors show elevation accuracy from smartphones varies greatly in different areas of the world. In areas in the USA, Europe, Australia, Japan, etc. (where most published results are from) errors are generally within 10 meters or so, but in more remote areas (such as on a remote island in Fiji), errors in the order of ±60 meters are not uncommon. Using two different mobile applications at sea level at one location resulted in reported elevations from −24 m to +58.9 m. These studies are preliminary and more research is needed in different areas of the world.

2.7.7. GPS-enabled Digital Cameras

GPS-enabled digital cameras are like smartphones with respect to positional accuracy as they have similar sized in-board antennas. To conserve battery life, most GPS-enabled digital cameras have options to set positional update intervals. Depending on the camera, these can range from once every second to once every five minutes. The setting of this interval may have significant implications with respect to both coordinates and uncertainty.

Underwater digital cameras only update their position when the diver or snorkeler takes the camera above the surface long enough for the GPS to fix its position.

2.7.8. Google Earth

Using a large sample size (n>20,000) of GPS benchmarks in a variety of terrains in the United States, Wang et al. 2017 found that elevations in the Google Earth terrain model had a boundary of error interval at 95 per cent (BE95) of ±44 m, with worst-case scenarios around 200 m. The same study found that Google Earth terrain model had a BE95 of ±6 m along highways. Though we find no data for elsewhere in the world at this time, we recommend using the values extracted from the work of Wang et al. 2017 as estimates of elevational uncertainty when the source is the Google Earth terrain model. A second study using Google Earth to determine elevation in three regions of Egypt (El-Ashmawy 2016) on flat, medium, and steep terrains concluded that elevation data is more accurate in flat areas or areas with small height difference, with an accuracy of approximately 1.85 m (RMSE) and an error range of less than 3.72 m (and in some findings less than 1 m). Increasing the difference in height leads to decrease in the obtained accuracy with the RMSE rising to 5.69 m in steep terrain.

2.8. Headings

Compass directions (also known as headings) can be rather ambiguous. North, for example, might be any direction between northwest and northeast if more specific information is not provided. There are several ways to avoid ambiguity when recording headings. One way is to qualify the direction with "due" (e.g. "due north") if the heading warrants it. A second way to avoid ambiguity is to use two orthogonal headings in locality descriptions, making implicit that both components are "due". Finally, ambiguity can be reduced if headings are given in degrees from north (0° is north, 90° is east, 180° is south, and 270° is west).
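To illustrate headings given in degrees from north, the sketch below converts 16-point compass abbreviations to degrees and returns the range of directions an unqualified heading might cover. The ±45° spread for "North" comes from the text above; applying the same spread to every other compass point is an assumption made here for illustration, and the function names are hypothetical.

```python
# 16-point compass rose, clockwise from north (22.5 degrees per point).
COMPASS_POINTS = [
    "N", "NNE", "NE", "ENE", "E", "ESE", "SE", "SSE",
    "S", "SSW", "SW", "WSW", "W", "WNW", "NW", "NNW",
]


def heading_to_degrees(point):
    """Return degrees clockwise from north for a compass point such as 'NE'."""
    return COMPASS_POINTS.index(point.strip().upper()) * 22.5


def unqualified_heading_range(point):
    """Return (from_deg, to_deg), clockwise, for a heading not qualified with 'due'.

    Assumption: the spread is 45 degrees either side of the stated heading,
    generalized from the 'North' example in the text.
    """
    center = heading_to_degrees(point)
    return ((center - 45.0) % 360.0, (center + 45.0) % 360.0)


if __name__ == "__main__":
    print(heading_to_degrees("E"))          # due east
    print(unqualified_heading_range("N"))   # anywhere from northwest to northeast
```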

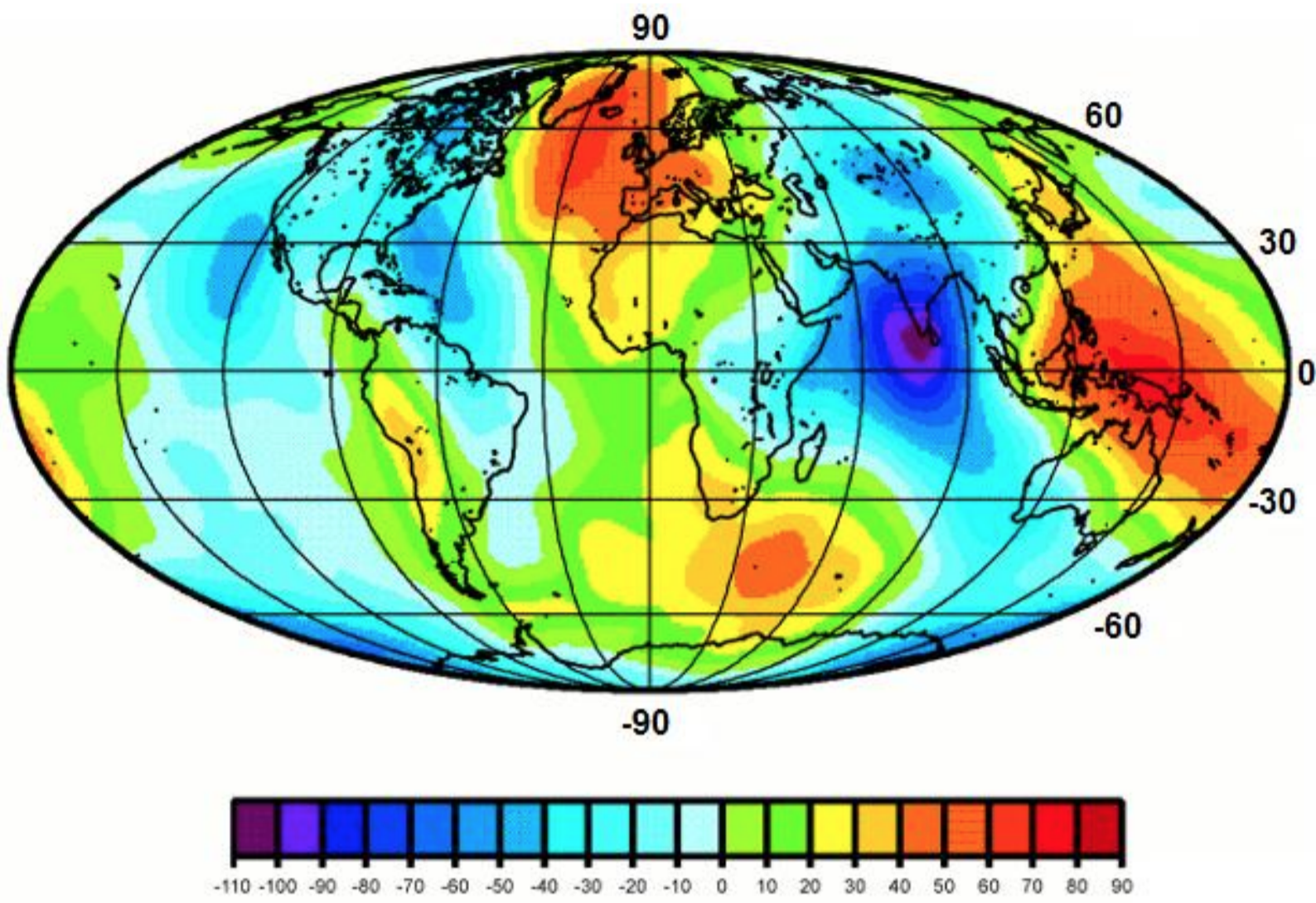

It is important to record headings based on True North (true heading) and not on Magnetic North (magnetic heading). The differences between True North and Magnetic North vary throughout the world, and in some places can vary greatly across a very small distance (NOAA 2019, NOAA/NCEI & CIRES 2019). For example, in an area about 250 km NW of Minneapolis in the United States, the anomalous magnetic declination (the difference between the declination caused by the Earth’s outer core and the declination at the surface) changes from 16.6° E to 12.0° W across a distance of just 6 km (Goulet 2001).

The differences between True North and Magnetic North also change over time (NOAA n.d.a). The National Oceanic and Atmospheric Administration (NOAA) has an online calculator that can calculate the anomalous or geomagnetic declination (adjustment needed to convert the magnetic reading to a reading based on True North) for any place on earth and at any point in time. If you need to make adjustments, we suggest that you use this calculator to determine the magnetic declination for the area in question. Otherwise determine your heading using a reliable map and always report your methods. Note that some smartphone apps will make that calculation for you, and allow you to set your app to record either Magnetic North or True North.
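Once the declination for a place and date is known, the adjustment itself is a single addition. The sketch below assumes the common sign convention in which easterly declination is positive and westerly declination is negative. The function name and the example values are hypothetical, and the declination must still come from a trusted source such as the calculator mentioned above.

```python
def magnetic_to_true(magnetic_heading_deg, declination_deg):
    """Convert a magnetic heading to a true heading, in degrees from north.

    Assumption: declination is positive when Magnetic North lies east of
    True North and negative when it lies west.
    """
    return (magnetic_heading_deg + declination_deg) % 360.0


if __name__ == "__main__":
    # Hypothetical readings: compass shows 350 degrees, declination is 16.6 degrees E.
    print(round(magnetic_to_true(350.0, 16.6), 1))
    # Hypothetical readings: compass shows 5 degrees, declination is 12.0 degrees W.
    print(round(magnetic_to_true(5.0, -12.0), 1))
```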

2.9. Offsets

An offset is a displacement from a reference point, named place, or other feature, and is generally accompanied by a direction (or heading, see §2.8). One of the best ways to describe a locality is with orthogonal offsets from a small, persistent, easy to locate feature (see §2.2). Using an offset at a very specific heading is a second option, though the uncertainty still grows with the offset distance. Offsets along a path are a third reasonable option for describing a locality, though they tend to be much harder to measure after the fact. Other locality types that use offsets (e.g. an offset direction without a distance, or an offset distance without a direction) tend to introduce excessive uncertainty and should be avoided.

2.9.1. Offset Distance Only

A locality consisting of an offset from a feature without a heading may arise as a result of an error when recording the locality in the field or through data entry. If the feature is small (such as a trig point) then the overall uncertainty will be largely due to the offset. With larger features (such as a town, or a lake), both the offset from the feature and the extent of the feature may contribute significantly to the overall uncertainty. The original collection catalogues or labels may contain information that can make the locality more specific. If not, a "Distance only" locality (e.g. "5 km from Lake Vättern, Sweden") might be envisioned as a band running around the reference feature at the distance given in the locality description. The problem is that we do not know what was being used as the reference (some place in the lake, or some place on the edge), nor do we know whether the offset was perpendicular to an edge or at some oblique angle to it. Because of these confounding factors, it is recommended to treat the locality as if it were a feature enlarged on all sides by the combination of all the sources of uncertainty (see Offset – Distance only in Georeferencing Quick Reference Guide (Zermoglio et al. 2020)).
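One way to read this recommendation is as a simple sum: the radial of the feature itself, plus the offset distance, plus any other sources of uncertainty. The sketch below is a simplification under that assumption, with hypothetical function and parameter names and placeholder numbers; the Georeferencing Quick Reference Guide remains the reference for the actual procedure.

```python
def distance_only_radial_m(feature_radial_m, offset_m, other_uncertainty_m=0.0):
    """Return the radial of a feature enlarged on all sides by the offset.

    Assumption: the contributions are simply added. All inputs are in meters.
    """
    return feature_radial_m + offset_m + other_uncertainty_m


if __name__ == "__main__":
    # Placeholder values only: a feature with a 60 km radial, a 5 km offset,
    # and 500 m of other uncertainty.
    print(distance_only_radial_m(60_000.0, 5_000.0, 500.0))
```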

2.9.2. Offset Direction Only

A locality with a heading from a feature, but with no distance (e.g. "East of Albuquerque"), is particularly ambiguous and very subjective to georeference. With no additional information to constrain the distance, there is no clear indication of how far one might have to go to reach the location: to the next nearest feature, to the next nearest feature of equivalent size, to a place where there is a major change in biome (such as a coast), or just keep going?

Note that seldom is such locality information given alone. For example, the locality may have administrative geography information (e.g. ‘East of Albuquerque, Bernalillo County, New Mexico’). This gives you a stopping point (e.g. the county border), and should allow you to georeference the locality (see Offset – Heading only in Georeferencing Quick Reference Guide (Zermoglio et al. 2020)). In any case, it is highly recommended not to record locality descriptions in this way.

2.9.3. Offset at a Heading

A locality that contains an offset in a given direction to or from a feature is treated here as an "offset at a heading". There are several variations on such localities. One difficulty in determining a georeference for this type of locality description is knowing how the offset was determined – for example, by air, or along a path such as a road or river. Therefore, whenever a locality with an offset at a heading is described, be sure to be explicit about what is intended.

It is not uncommon for

Where the sense of the offset cannot be determined from the locality description or additional information and there is no obvious major path that can be followed in the rough direction and distance given, then it is best to assume the collector measured the distance by air. Whatever the decision, document the assumption in the georeference remarks (e.g. ‘Assumed "by air" – no roads E out of Yuma’, or ‘Assumed "by road" on Hwy. 80’) and georeference accordingly (see Offset – Distance at a Heading and Offset – Distance along a Path in Georeferencing Quick Reference Guide (Zermoglio et al. 2020)).

The addition of an adverbial modifier to the distance part of a locality description (such as "about 25 km"), while an honest observation, should not affect the determination of the geographic coordinates or the maximum uncertainty. Treat the uncertainty due to distance precision normally (see §3.4.6).
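When an offset at a heading is taken to be "by air", the end point can be estimated with the standard great-circle destination formula. The sketch below assumes a spherical Earth with a mean radius of 6,371,008.8 m and a heading in degrees from True North. The function name and the starting coordinates in the usage example are hypothetical, and the result is only the center of the georeference, not its uncertainty.

```python
import math

# Assumption: spherical Earth with this mean radius.
EARTH_RADIUS_M = 6_371_008.8


def offset_at_heading(lat_deg, lon_deg, distance_m, heading_deg):
    """Return (lat, lon) in degrees reached by travelling distance_m 'by air'
    from the starting point at the given true heading."""
    lat1 = math.radians(lat_deg)
    lon1 = math.radians(lon_deg)
    theta = math.radians(heading_deg)
    delta = distance_m / EARTH_RADIUS_M  # angular distance in radians

    sin_lat2 = (math.sin(lat1) * math.cos(delta)
                + math.cos(lat1) * math.sin(delta) * math.cos(theta))
    sin_lat2 = max(-1.0, min(1.0, sin_lat2))  # guard against rounding error
    lat2 = math.asin(sin_lat2)
    lon2 = lon1 + math.atan2(
        math.sin(theta) * math.sin(delta) * math.cos(lat1),
        math.cos(delta) - math.sin(lat1) * sin_lat2,
    )
    # Normalize longitude to the range [-180, 180).
    lon2_deg = (math.degrees(lon2) + 540.0) % 360.0 - 180.0
    return math.degrees(lat2), lon2_deg


if __name__ == "__main__":
    # Hypothetical example: 25 km due east (90 degrees) of a point at 32.7 N, 114.6 W.
    print(offset_at_heading(32.7, -114.6, 25_000.0, 90.0))
```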

2.9.4. Offset along a Path

Sometimes it is convenient to describe a locality as a distance along a curvilinear feature — a path such as a road, river, trail, etc. (see Offset – Distance along a Path in Georeferencing Quick Reference Guide (Zermoglio et al. 2020)). One advantage of a description of this kind is that it avoids the uncertainty due to an imprecise heading. It might also be easy to register, such as when tracking distance with the odometer of a car while driving. However, a disadvantage is that it may not be quite as easy to determine the same location afterwards from maps alone during the georeferencing process. One reason is that you have to trace the facsimile of the path on a map. The map may have errors, loss of resolution due to map scale, inconsistencies with conditions at the time of the event, or might not even be present. There is also a difference between distance on the topographic surface and distance on a map, though for most normal situations (along roads and navigable waterways) the difference is <1% (see Offset – Distance along a Path in Georeferencing Quick Reference Guide (Zermoglio et al. 2020)). Worse, the path may have changed over time, making it even more difficult to find the exact locality retrospectively.

If the locality references a river, such as in the example "16 mi downstream from St Louis on the left bank of the Mississippi River", it is reasonable to assume that the offset is along the river. In this example, the locality is on the east side of the river, in Illinois, rather than on the west side, in Missouri, as the reference to "left bank" is conventionally taken to be in the orientation looking downstream.

2.9.5. Offset along Orthogonal Directions

This type of locality refers to rectilinear distances in two orthogonal directions from a feature, for example, "2 mi E and 1.5 mi N of Kandy" (see Offset – Distance along Orthogonal Directions in Georeferencing Quick Reference Guide (Zermoglio et al. 2020) and Figure 12). This way of describing a locality can be very effective, as it removes one of the potentially largest sources of uncertainty, the ever-expanding uncertainty of direction with distance. Orthogonal directions carry no directional uncertainty, because each distance is implicitly measured "by air" exactly along its stated direction. It is for this reason that this locality type is highly recommended for locality descriptions.
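For short orthogonal offsets, the new coordinates can be approximated by converting each distance to a change in latitude or longitude. The sketch below uses a local flat-surface approximation on a spherical Earth (mean radius 6,371,008.8 m), which is an assumption that degrades with long offsets and near the poles. The function name is hypothetical, and the starting coordinates are rough placeholders, not a vetted georeference of Kandy.

```python
import math

# Assumptions: spherical Earth with this mean radius; international mile.
EARTH_RADIUS_M = 6_371_008.8
METERS_PER_MILE = 1609.344


def orthogonal_offset(lat_deg, lon_deg, east_m, north_m):
    """Return (lat, lon) in degrees after moving east_m due east and north_m
    due north. Use negative values for west and south."""
    dlat = math.degrees(north_m / EARTH_RADIUS_M)
    # A degree of longitude shrinks with the cosine of the latitude.
    dlon = math.degrees(
        east_m / (EARTH_RADIUS_M * math.cos(math.radians(lat_deg)))
    )
    return lat_deg + dlat, lon_deg + dlon


if __name__ == "__main__":
    # "2 mi E and 1.5 mi N" of a placeholder starting point at 7.29 N, 80.63 E.
    print(orthogonal_offset(7.29, 80.63, 2 * METERS_PER_MILE, 1.5 * METERS_PER_MILE))
```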

2.10. Water Depth

Water depth should be recorded as a range; i.e. as minimum and maximum positive distances in meters below the air-water interface of the water body (

2.10.1. Bathymetry

Since 2003, the most commonly used global coverage of bathymetry has been the One Minute General Bathymetric Chart of the Oceans (GEBCO 2019a). In 2019, however, a much finer and more detailed 15 arc-second grid coverage was released (GEBCO 2019b). Its 3,732,480,000 grid cells (43,200 rows by 86,400 columns) cover from 89°59'52.5'' N, 179°59'52.5'' W to 89°59'52.5'' S, 179°59'52.5'' E, with elevation given for each pixel center. Many factors determine the vertical accuracy of these grids, including the presence of steep canyons, water depth and turbidity (which affect instrument penetration; acoustic beams also get wider the deeper they go), and methodology (satellite, single beam echo sounders (SES), multibeam echo sounders (MES), airborne laser (LADS), Light Detection and Ranging (LIDAR), etc.) (Wolf et al. 2019).
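The grid dimensions and the half-cell offsets of the corner coordinates follow directly from the 15 arc-second spacing, as the sketch below shows. The index order used here (counting from the South Pole and from 180° W) is an assumption made for illustration and may not match the order in which the published files store their values. The function names are hypothetical.

```python
import math

CELLS_PER_DEGREE = 240                 # 15 arc-seconds = 1/240 of a degree
N_LAT = 180 * CELLS_PER_DEGREE         # 43,200 cells from pole to pole
N_LON = 360 * CELLS_PER_DEGREE         # 86,400 cells around the globe


def cell_index(lat_deg, lon_deg):
    """Return (lat_index, lon_index) of the cell containing a point.

    Assumption: indices count from the South Pole and from 180 W.
    """
    lat_index = min(int(math.floor((lat_deg + 90.0) * CELLS_PER_DEGREE)), N_LAT - 1)
    lon_index = min(int(math.floor((lon_deg + 180.0) * CELLS_PER_DEGREE)), N_LON - 1)
    return lat_index, lon_index


def cell_center(lat_index, lon_index):
    """Return (lat, lon) in decimal degrees of a cell center."""
    lat = -90.0 + (lat_index + 0.5) / CELLS_PER_DEGREE
    lon = -180.0 + (lon_index + 0.5) / CELLS_PER_DEGREE
    return lat, lon


if __name__ == "__main__":
    print(N_LAT * N_LON)        # total number of cells
    print(cell_center(0, 0))    # southwestern-most cell center
    print(cell_index(0.0, 0.0))
```

The first cell center lies 7.5 arc-seconds inside the grid edge, which is why the coverage is quoted as running to 89°59'52.5'' rather than to 90°.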

Bathymetric contours have generally only been available for harbors, coastal and near inshore areas, in some places extending to the edges of the continental slope. Where bathymetric contours (also called depth contours or isobaths) do exist, they are generally quite coarse (except in areas like the North Sea, and in harbors), and get wider apart as the depth increases. For example, the 2009 bathymetric contours for Australia are at 20 m, 40 m, 100 m, 200 m and 400 m. In some harbors, the contour interval is as small as one meter (Data.gov.au 2018). In 2019, the GEBCO_2019 global bathymetric contour dataset was derived from the GEBCO_2019 15 arc-second grid mentioned above. At large scales (1:5,000,000 and closer), the contour interval is 500 m; at medium scales (1:5,000,000 to 1:30,000,000) the contour interval is 1000 m; and at small scales (1:30,000,000 and greater), the contour interval is 2000 meters. Supplementary contours are shown in shallow waters (less than 500 m) (NCEI-NOAA 2019).